Deploying Spinnaker on Kubernetes (k8s): A Practical Guide

Author: compiled from the Internet

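Basic concepts of cloud computing

What is cloud computing

There are many definitions of cloud computing; you can find at least 100 explanations of what it actually is. Cloud computing is a model for adding, using, and delivering Internet-based services, and it usually involves providing dynamic, easily scalable, and often virtualized resources over the Internet. "Cloud" is a metaphor for the network and the Internet. Cloud computing can even give you computing power on the order of 10 trillion operations per second, enough to simulate nuclear explosions or forecast climate change and market trends. Users connect to the data center from desktops, laptops, phones, and other devices and compute according to their own needs.

The most widely accepted definition today comes from the US National Institute of Standards and Technology (NIST): cloud computing is a pay-per-use model that provides available, convenient, on-demand network access to a shared pool of configurable computing resources (networks, servers, storage, applications, and services) that can be rapidly provisioned with minimal management effort or interaction with the service provider.

Liu Peng, a Chinese expert on grid and cloud computing, gives the following definition: "Cloud computing distributes computing tasks over a resource pool made up of a large number of computers, so that application systems can obtain computing power, storage space, and software services as needed."

In the narrow sense, cloud computing means that a vendor builds a data center or supercomputer with distributed computing and virtualization technology and offers data storage, analysis, and scientific computing services to developers or enterprise customers, either free of charge or rented on demand. In the broad sense, cloud computing means that a vendor builds clusters of network servers and offers different kinds of customers different kinds of services, such as online software, hardware rental, data storage, and computation and analysis.

Put simply, the "cloud" in cloud computing is the set of resources that live on server clusters on the Internet. It includes hardware resources (servers, storage, CPUs, and so on) and software resources (such as applications and integrated development environments). The local computer only has to send a request over the Internet; thousands of remote computers then provide the required resources and return the result. The local computer does almost no work itself, and all processing is done by the computer cluster operated by the cloud provider.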

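How cloud computing works

By distributing computation across a large number of distributed computers rather than running it on local computers or remote servers, the enterprise data center operates more like the Internet. This lets an enterprise shift resources to the applications that need them and access computing and storage systems on demand.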

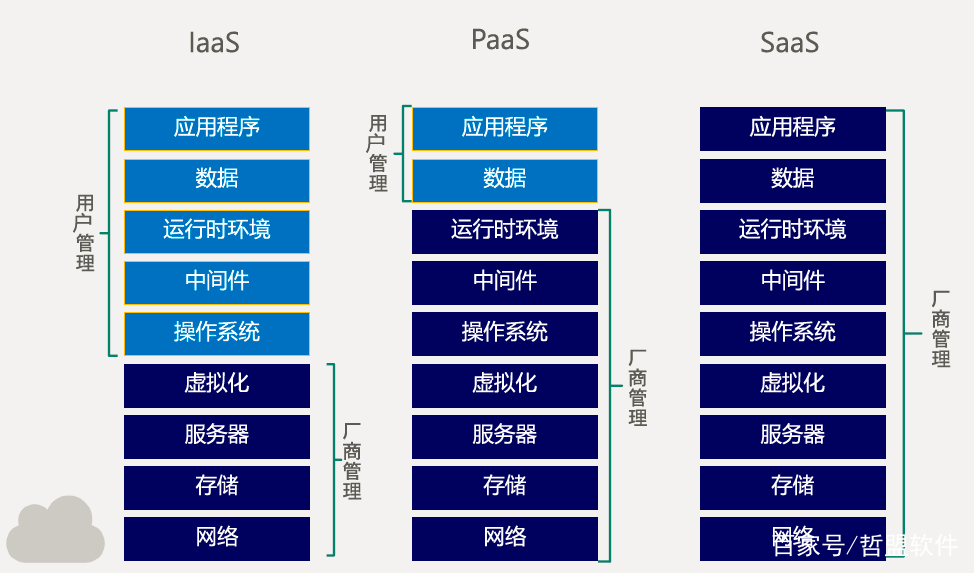

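The three service models of cloud computing

The three widely recognized service models of cloud computing are IaaS, PaaS, and SaaS.

(The image was found through Baidu; it is good enough to get the idea.)

(1) IaaS

IaaS (Infrastructure as a Service): consumers obtain services from a complete computing infrastructure over the Internet.

(2) PaaS

PaaS (Platform as a Service): PaaS delivers the software development platform itself as a service, offered to users in the SaaS manner. In that sense, PaaS is also an application of the SaaS model. The emergence of PaaS in turn accelerates the growth of SaaS, in particular the speed at which SaaS applications can be developed.

(3) SaaS

SaaS (Software as a Service): a model for delivering software over the Internet. Instead of buying software, users rent web-based software from a provider to manage their business activities. Compared with traditional software, SaaS solutions have clear advantages, including lower upfront cost, easier maintenance, and faster rollout.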

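Core technologies of cloud computing

Cloud computing systems use many technologies. The most critical are the programming model, data management, data storage, virtualization, and cloud platform management.

(1) Programming model

MapReduce is a programming model developed by Google that can be used from Java, Python, and C++. It is a simplified distributed programming model and an efficient task scheduling model for parallel computation over large data sets (larger than 1 TB). Its strict programming model keeps programming in a cloud environment simple.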
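To make the MapReduce model more concrete, here is a minimal single-machine sketch of a word-count job written with ordinary shell tools. It only imitates the three conceptual phases (map, shuffle, reduce); it is not distributed, and the function names `map_words`, `shuffle`, `reduce_counts`, and `wordcount` are made up for this illustration.

```shell
#!/bin/sh
# map: emit one "word<TAB>1" pair for every word read from stdin
map_words() {
  tr -s '[:space:]' '\n' | awk 'NF { print $1 "\t1" }'
}

# shuffle: sort so that identical keys end up next to each other
shuffle() {
  LC_ALL=C sort
}

# reduce: sum the counts of each key, then print the keys in sorted order
reduce_counts() {
  awk -F'\t' '{ sum[$1] += $2 } END { for (w in sum) print w "\t" sum[w] }' | LC_ALL=C sort
}

wordcount() {
  map_words | shuffle | reduce_counts
}

printf 'to be or not to be\n' | wordcount
```

In a real MapReduce system the map and reduce functions run on many machines and the framework performs the shuffle over the network; the pipeline above only shows how the data flows between the phases.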

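(2) Distributed storage of massive data

A cloud computing system consists of a large number of servers and serves a large number of users at the same time. It therefore stores data in a distributed fashion and uses redundant storage to guarantee data reliability. The storage systems most widely used in cloud computing are Google's GFS and HDFS, the open source implementation of GFS developed by the Hadoop team.

(3) Management of massive data

Cloud computing has to process and analyze massive, distributed data, so the data management technology must be able to manage large volumes of data efficiently. The main data management technologies in cloud computing systems are Google's Bigtable (BT) and HBase, the open source data management module developed by the Hadoop team.

(4) Virtualization

Virtualization isolates software applications from the underlying hardware. It includes the partitioning mode, which splits a single resource into several virtual resources, and the aggregation mode, which combines several resources into one virtual resource. By target, virtualization can be divided into storage virtualization, compute virtualization, network virtualization, and so on; compute virtualization is further divided into system-level virtualization, application-level virtualization, and desktop virtualization.

(5) Cloud platform management

Cloud resources are vast: a large number of servers spread over different locations run hundreds of applications at the same time. Managing these servers effectively and keeping the whole system available without interruption is a major challenge. The platform management technology of a cloud computing system makes large numbers of servers work together, makes it easy to deploy and launch services, detects and recovers from failures quickly, and achieves reliable operation of large-scale systems through automation and intelligent tooling.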

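What is Kubernetes?

This section gives an overview of Kubernetes.

Kubernetes is a portable, extensible, open source platform for managing containerized workloads and services that facilitates both declarative configuration and automation. It has a large, rapidly growing ecosystem. Kubernetes services, support, and tools are widely available.

The name Kubernetes originates from Greek and means "helmsman" or "pilot". Google open-sourced the Kubernetes project in 2014. Kubernetes builds on more than a decade of Google's experience running production workloads at scale, combined with the best ideas and practices from the community.

Going back in time

Let's look back at why Kubernetes is so useful.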

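Traditional deployment era:

Early on, organizations ran applications on physical servers. There was no way to define resource boundaries for applications on a physical server, and this caused resource allocation issues. For example, if multiple applications run on one physical server, one application may take up most of the resources, and as a result the other applications underperform. One solution is to run each application on a different physical server, but this does not scale because resources are underutilized, and it is expensive for organizations to maintain many physical servers.

Virtualized deployment era:

Virtualization was introduced as a solution. It allows you to run multiple virtual machines (VMs) on a single physical server's CPU. Virtualization isolates applications between VMs and provides a level of security, because the information of one application cannot be freely accessed by another application.

Virtualization makes better use of the resources in a physical server and scales better because applications can be added or updated easily, which reduces hardware costs, among other benefits.

Each VM is a full machine running all the components, including its own operating system, on top of the virtualized hardware.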

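Container deployment era:

Containers are similar to VMs, but they have relaxed isolation properties so that applications can share the operating system (OS). Containers are therefore considered lightweight. Like a VM, a container has its own filesystem, share of CPU, memory, process space, and more. Because containers are decoupled from the underlying infrastructure, they are portable across clouds and OS distributions.

Containers have become popular because they provide many advantages. Some of the benefits are listed below:

- Agile application creation and deployment: container images are easier and more efficient to create than VM images.

- Continuous development, integration, and deployment: reliable and frequent container image builds and deployments, with quick and simple rollbacks (thanks to image immutability).

- Dev and Ops separation of concerns: application container images are created at build/release time rather than at deployment time, which decouples applications from infrastructure.

- Observability: surfaces not only OS-level information and metrics but also application health and other signals.

- Environmental consistency across development, testing, and production: runs the same on a laptop as it does in the cloud.

- Portability across clouds and OS distributions: runs on Ubuntu, RHEL, CoreOS, on-premises, on Google Kubernetes Engine, and anywhere else.

- Application-centric management: raises the level of abstraction from running an OS on virtual hardware to running an application on an OS using logical resources.

- Loosely coupled, distributed, elastic, liberated microservices: applications are broken into smaller, independent pieces that can be deployed and managed dynamically, rather than running as a monolith on one big single-purpose machine.

- Resource isolation: predictable application performance.

- Resource utilization: high efficiency and density.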

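Why you need Kubernetes and what it can do

Containers are a good way to package and run applications. In a production environment, you need to manage the containers that run the applications and make sure there is no downtime. For example, if a container goes down, another container needs to start. Wouldn't it be easier if the system handled this for you?

This is exactly the problem Kubernetes solves. Kubernetes gives you a framework to run distributed systems resiliently. It takes care of scaling, failover, deployment patterns, and more. For example, Kubernetes can easily manage a canary deployment for your system.

Kubernetes provides you with: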

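- Service discovery and load balancing: Kubernetes can expose a container using a DNS name or its own IP address. If traffic to a container is high, Kubernetes can load balance and distribute the network traffic so that the deployment stays stable.

- Storage orchestration: Kubernetes lets you automatically mount a storage system of your choice, such as local storage or a public cloud provider.

- Automated rollouts and rollbacks: you describe the desired state of your deployed containers, and Kubernetes changes the actual state to the desired state at a controlled rate. For example, you can automate Kubernetes to create new containers for your deployment, remove existing containers, and adopt all their resources for the new containers.

- Automatic bin packing: Kubernetes lets you specify how much CPU and memory (RAM) each container needs. When containers have resource requests specified, Kubernetes can make better decisions about managing the containers' resources.

- Self-healing: Kubernetes restarts containers that fail, replaces containers, kills containers that do not respond to your user-defined health check, and does not advertise them to clients until they are ready to serve.

- Secret and configuration management: Kubernetes lets you store and manage sensitive information such as passwords, OAuth tokens, and SSH keys. You can deploy and update secrets and application configuration without rebuilding your container images and without exposing secrets in your stack configuration.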
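The "automatic bin packing" item above can be pictured with a toy first-fit placement. This is only a sketch under strong assumptions: a single resource (CPU in millicores), nodes tried in order, and no scoring. The real Kubernetes scheduler filters and scores nodes and is considerably more involved; `first_fit` is a hypothetical helper name.

```shell
#!/bin/sh
# first_fit "CAP1 CAP2 ..." "REQ1 REQ2 ..."
# Places each pod (by CPU request) on the first node that still has room.
first_fit() {
  awk -v caps="$1" -v reqs="$2" 'BEGIN {
    n = split(caps, free, " ")   # free[j]: remaining capacity of node j
    m = split(reqs, req, " ")    # req[i]: CPU request of pod i
    for (i = 1; i <= m; i++) {
      placed = 0
      for (j = 1; j <= n; j++) {
        if (req[i] + 0 <= free[j] + 0) {
          free[j] -= req[i]
          printf "pod%d -> node%d\n", i, j
          placed = 1
          break
        }
      }
      if (!placed) printf "pod%d -> unschedulable\n", i
    }
  }'
}

# Two nodes with 1000m and 500m free; four pods with different requests.
first_fit "1000 500" "600 500 300 400"
```

The last pod cannot be placed because neither node has 400m left, which is why accurate resource requests matter for scheduling decisions.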

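What Kubernetes is not

Kubernetes is not a traditional, all-inclusive PaaS (Platform as a Service) system. Because Kubernetes operates at the container level rather than at the hardware level, it provides some generally applicable features common to PaaS offerings, such as deployment, scaling, load balancing, logging, and monitoring. However, Kubernetes is not monolithic, and these default solutions are optional and pluggable. Kubernetes provides the building blocks for developer platforms, but preserves user choice and flexibility where it matters.

Kubernetes: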

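- Does not limit the types of applications supported. Kubernetes aims to support an extremely diverse variety of workloads, including stateless, stateful, and data-processing workloads. If an application can run in a container, it should run well on Kubernetes.

- Does not deploy source code and does not build your application. Continuous integration, delivery, and deployment (CI/CD) workflows are determined by organizational culture and preferences as well as technical requirements.

- Does not provide application-level services as built-in services, such as middleware (for example, message buses), data-processing frameworks (for example, Spark), databases (for example, MySQL), caches, or cluster storage systems (for example, Ceph). Such components can run on Kubernetes, and/or can be accessed by applications running on Kubernetes through portable mechanisms such as the Open Service Broker.

- Does not dictate logging, monitoring, or alerting solutions. It provides some integrations as proof of concept, and mechanisms to collect and export metrics.

- Does not provide or mandate a configuration language/system (for example, jsonnet). It provides a declarative API that may be targeted by arbitrary forms of declarative specifications.

- Does not provide or adopt any comprehensive machine configuration, maintenance, management, or self-healing systems.

- Additionally, Kubernetes is not a mere orchestration system. In fact, it eliminates the need for orchestration. The technical definition of orchestration is the execution of a defined workflow: first do A, then B, then C. In contrast, Kubernetes comprises a set of independent, composable control processes that continuously drive the current state towards the provided desired state. It does not matter how you get from A to C, and centralized control is not required either. This results in a system that is easier to use and more powerful, robust, resilient, and extensible.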
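The idea of a control process that drives the current state towards the desired state can be sketched as a tiny reconcile function. This is an illustration only, assuming the "state" is nothing more than a replica count; `reconcile` is a hypothetical name, and real Kubernetes controllers watch the API server rather than taking numbers as arguments.

```shell
#!/bin/sh
# reconcile DESIRED ACTUAL
# Prints the actions needed to move ACTUAL to DESIRED, then the final count.
reconcile() {
  rc_desired=$1
  rc_actual=$2
  # Too few replicas: start new ones until the counts match.
  while [ "$rc_actual" -lt "$rc_desired" ]; do
    rc_actual=$((rc_actual + 1))
    echo "start replica $rc_actual"
  done
  # Too many replicas: stop the extra ones.
  while [ "$rc_actual" -gt "$rc_desired" ]; do
    echo "stop replica $rc_actual"
    rc_actual=$((rc_actual - 1))
  done
  echo "converged at $rc_actual"
}

reconcile 3 1   # scale up from 1 to 3
reconcile 1 3   # scale down from 3 to 1
```

The function never needs to know how the system drifted away from the desired state; it only compares the two states and acts on the difference, which is the property the paragraph above describes.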

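Kubernetes as a PaaS platform

- More and more cloud vendors are building PaaS platforms on top of k8s.

- Prerequisites for gaining PaaS capabilities:

  - A unified application runtime environment (Docker)

  - IaaS capabilities (K8S)

  - Reliable middleware clusters and database clusters (mainly the DBA's job)

  - A distributed storage cluster (mainly the storage engineer's job)

  - Suitable monitoring and logging systems (Prometheus, ELK)

  - Complete CI and CD systems (Jenkins, ?)

- Common K8S-based CD systems:

  - In-house solutions

  - Argo CD

  - OpenShift

  - Spinnaker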

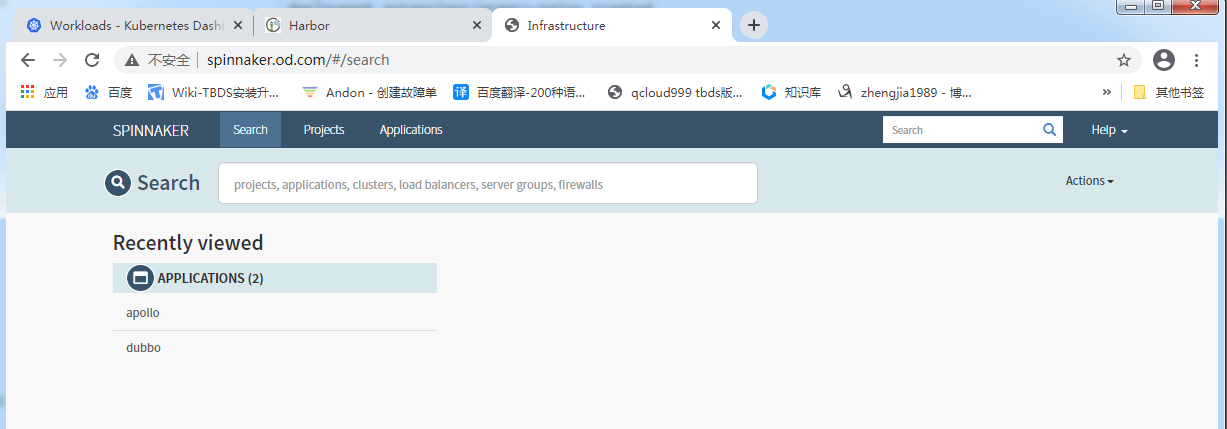

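Introduction to Spinnaker

Features:

Whatever the target environment, Spinnaker offers the same deployment advantages. Its features are:

- Repeatable, automated deployments through flexible and configurable pipelines;

- A global view of all environments, so you can check at any time where an application is in its deployment pipeline;

- Programmable configuration through a consistent and reliable API;

- Easy to configure, maintain, and extend;

- Operationally scalable;

- Compatible with Asgard features, so existing Asgard users do not need to migrate.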

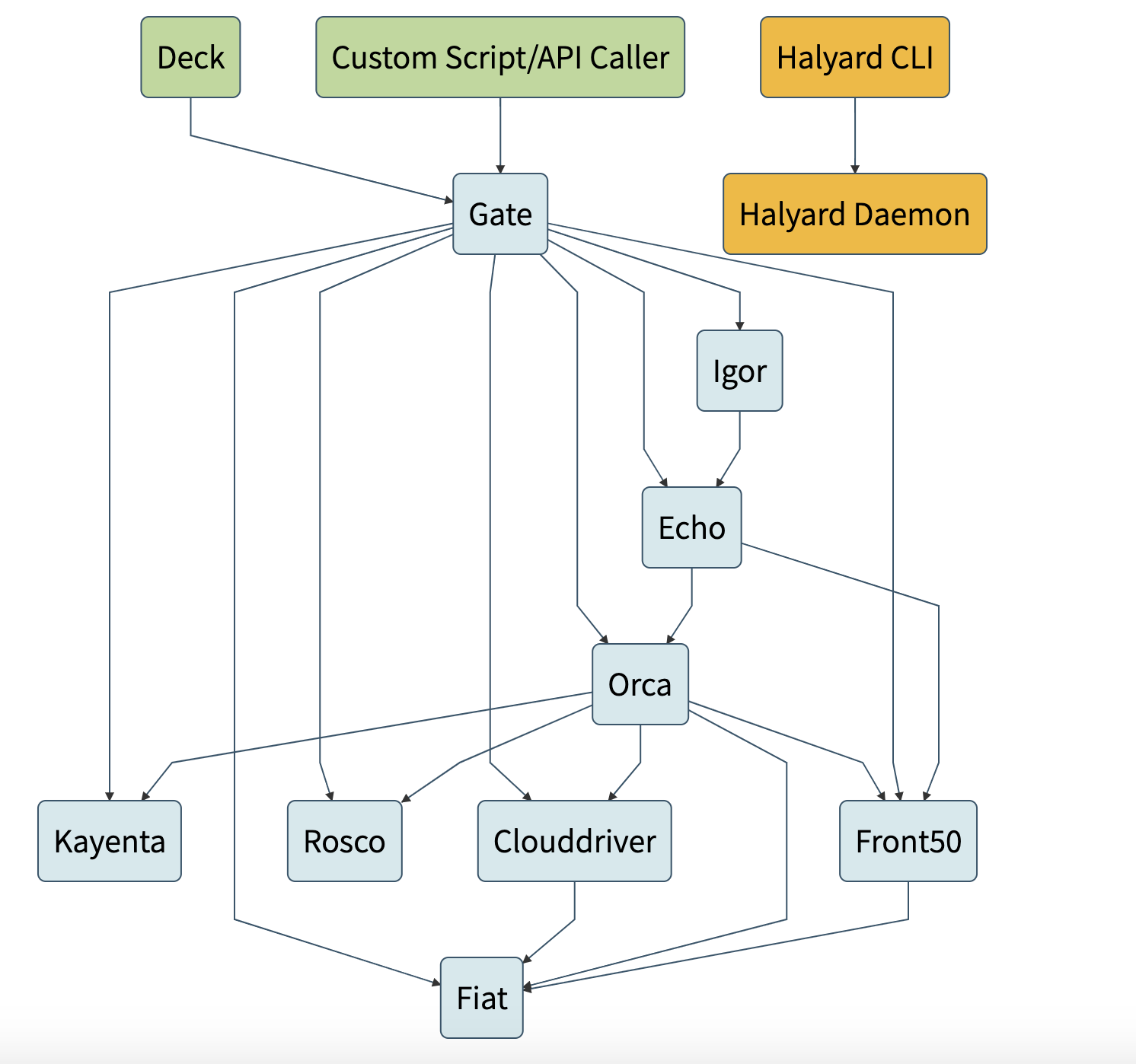

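Architecture:

- Deck: the user-facing UI component. It provides an intuitive, clean interface for driving release and deployment workflows visually.

- API: the API-facing component. Instead of using the bundled UI, you can call the API directly and let the backend run releases and other tasks for you.

- Gate: the API gateway component. Think of it as a proxy; all requests are forwarded through it.

- Rosco: the component that bakes images; it requires Packer to be configured.

- Orca: the core workflow engine, which manages pipelines.

- Igor: the component that integrates with other CI systems, such as Jenkins.

- Echo: the notification component, which sends email and other messages.

- Front50: the storage management component; it needs a backing store such as Redis or Cassandra to be configured.

- Clouddriver: the component that adapts Spinnaker to different cloud platforms, such as Kubernetes, Google, AWS EC2, and Microsoft Azure.

- Fiat: the authorization component. It configures permission management and supports OAuth, SAML, LDAP, GitHub teams, Azure groups, Google Groups, and others.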

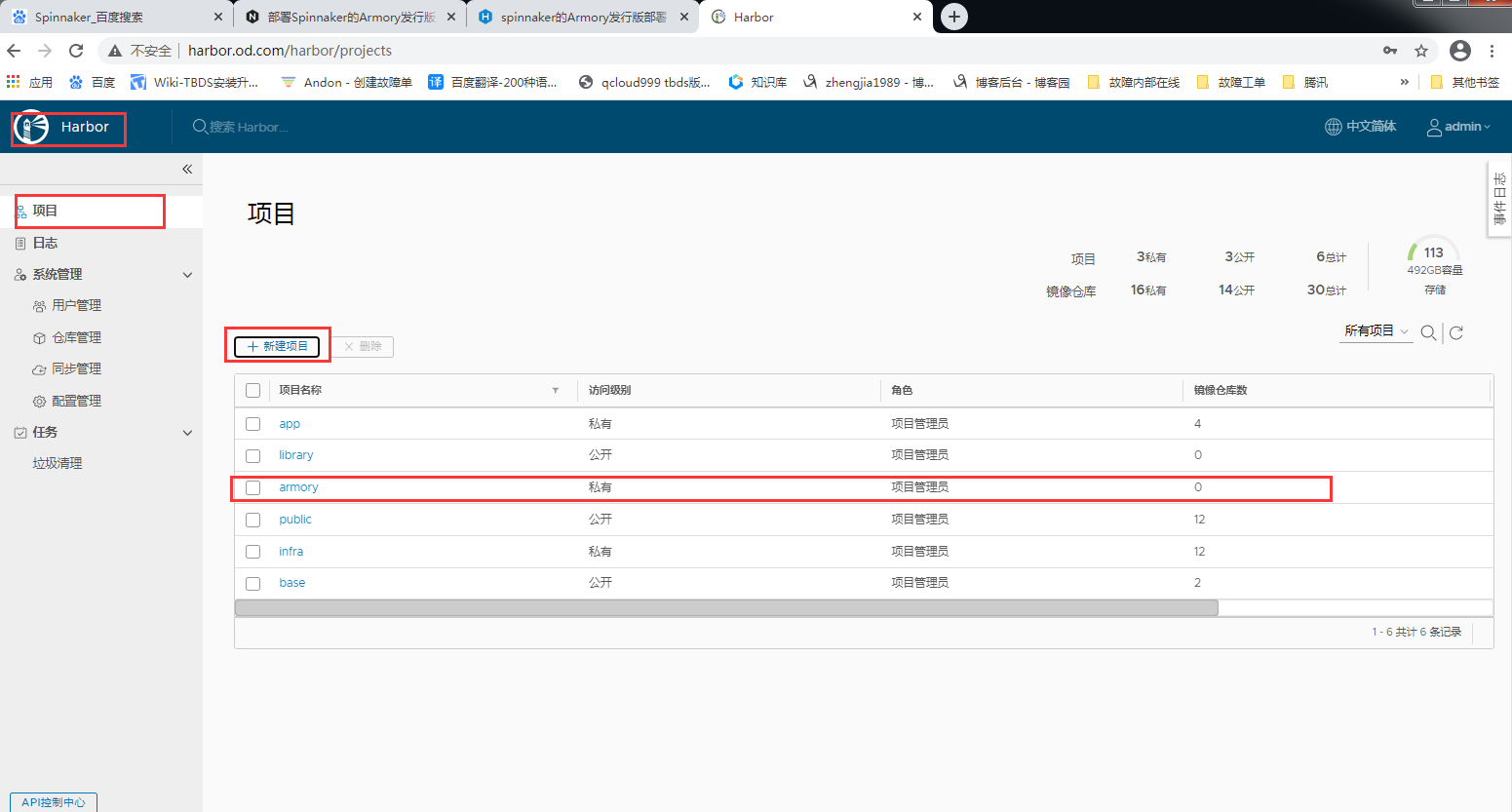

Deploying the Armory distribution of Spinnaker

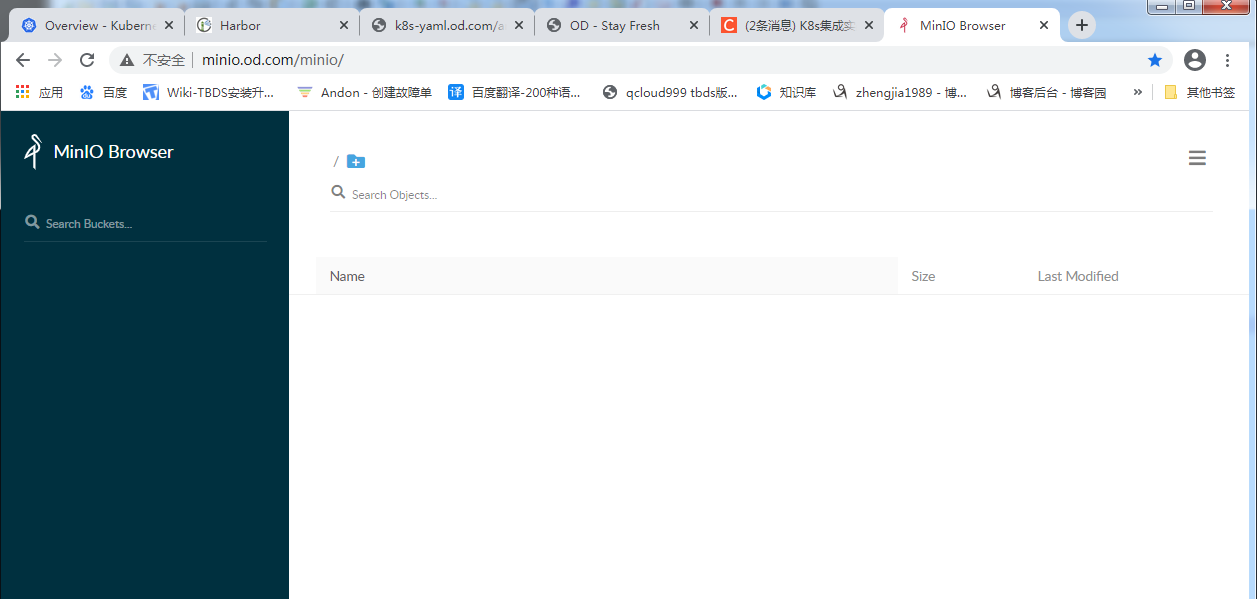

Deploying MinIO, the object storage component

Create the armory project in Harbor, then create the namespace and the image pull secret

[root@k8s-3 ~]# kubectl create ns armory

namespace/armory created

[root@k8s-3 ~]# kubectl create secret docker-registry harbor --docker-server=harbor.od.com --docker-username=admin --docker-password=Harbor12345 -n armory

secret/harbor created
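For orientation: a `docker-registry` secret stores a `.dockerconfigjson` document, and the `auth` field inside it is the base64 encoding of `username:password`. The sketch below reproduces only that encoding step with the credentials used above; `registry_auth` is a hypothetical helper name, not a kubectl feature.

```shell
#!/bin/sh
# registry_auth USER PASSWORD
# Prints the base64 string that ends up in the "auth" field of .dockerconfigjson.
registry_auth() {
  printf '%s:%s' "$1" "$2" | base64
}

registry_auth admin Harbor12345
```

Because base64 is an encoding and not encryption, anyone who can read the secret can recover the password, so access to the `armory` namespace should be restricted accordingly.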

Prepare the image

[root@k8s-5 ~]# docker pull minio/minio:latest

[root@k8s-5 ~]# docker images|grep minio

minio/minio latest 6c897135cbab 9 days ago 185MB

[root@k8s-5 ~]# docker tag 6c897135cbab harbor.od.com/armory/minio:latest

[root@k8s-5 ~]# docker push harbor.od.com/armory/minio:latest

Create the directory

[root@k8s-5 ~]# mkdir -p /data/k8s-yaml/armory/minio

[root@k8s-5 ~]# cd /data/k8s-yaml/armory/minio

Prepare the resource manifests

[root@k8s-5 minio]# pwd

/data/k8s-yaml/armory/minio

[root@k8s-5 minio]# vim deployment.yaml

[root@k8s-5 minio]# cat > deployment.yaml << 'EOF'
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    name: minio
  name: minio
  namespace: armory
spec:
  progressDeadlineSeconds: 600
  replicas: 1
  revisionHistoryLimit: 7
  selector:
    matchLabels:
      name: minio
  template:
    metadata:
      labels:
        app: minio
        name: minio
    spec:
      containers:
      - name: minio
        image: harbor.od.com/armory/minio:latest
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 9000
          protocol: TCP
        args:
        - server
        - /data
        env:
        - name: MINIO_ACCESS_KEY
          value: admin
        - name: MINIO_SECRET_KEY
          value: admin123
        readinessProbe:
          failureThreshold: 3
          httpGet:
            path: /minio/health/ready
            port: 9000
            scheme: HTTP
          initialDelaySeconds: 10
          periodSeconds: 10
          successThreshold: 1
          timeoutSeconds: 5
        volumeMounts:
        - mountPath: /data
          name: data
      imagePullSecrets:
      - name: harbor
      volumes:
      - nfs:
          server: k8s-5.host.com
          path: /data/nfs-volume/minio
        name: data
EOF

[root@k8s-5 minio]# vim service.yaml

[root@k8s-5 minio]# cat >service.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: minio
  namespace: armory
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 9000
  selector:
    app: minio
EOF

[root@k8s-5 minio]# vim ingress.yaml

[root@k8s-5 minio]# cat >ingress.yaml <<'EOF'
kind: Ingress
apiVersion: extensions/v1beta1
metadata:
  name: minio
  namespace: armory
spec:
  rules:
  - host: minio.od.com
    http:
      paths:
      - path: /
        backend:
          serviceName: minio
          servicePort: 80
EOF

Create the NFS directory

[root@k8s-5 minio]# mkdir /data/nfs-volume/minio

Apply the resource manifests

[root@k8s-3 ~]# kubectl apply -f http://k8s-yaml.od.com/armory/minio/deployment.yaml

deployment.apps/minio created

[root@k8s-3 ~]# kubectl apply -f http://k8s-yaml.od.com/armory/minio/service.yaml

service/minio created

[root@k8s-3 ~]# kubectl apply -f http://k8s-yaml.od.com/armory/minio/ingress.yaml

ingress.extensions/minio created

DNS resolution

[root@k8s-1 ~]# vim /var/named/od.com.zone

$ORIGIN od.com.
$TTL 600 ; 10 minutes
@ IN SOA dns.od.com. dnsadmin.od.com. (
        2019111031 ; serial
        10800      ; refresh (3 hours)
        900        ; retry (15 minutes)
        604800     ; expire (1 week)
        86400      ; minimum (1 day)
        )
        NS dns.od.com.
$TTL 60 ; 1 minute
dns     A 192.168.50.215
minio   A 192.168.50.10

[root@k8s-1 ~]# systemctl restart named

[root@k8s-1 ~]# dig -t A minio.od.com +short

192.168.50.10

[root@k8s-1 ~]# dig -t A minio.od.com +short @192.168.50.215

192.168.50.10
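If the zone is also served by secondary name servers, they only pick up a change when the SOA serial number increases. The helper below is a small sketch of bumping the serial, assuming the serial line carries a `; serial` comment as in the zone file above; `bump_serial` is a hypothetical name, and the function only prints the updated zone to stdout rather than editing the file.

```shell
#!/bin/sh
# bump_serial ZONEFILE
# Prints the zone with the first "; serial" line incremented by one.
bump_serial() {
  awk '/; serial/ && !bumped { sub(/[0-9]+/, $1 + 1); bumped = 1 } { print }' "$1"
}

# Demo on a throwaway copy of the SOA record.
zone=$(mktemp)
cat > "$zone" <<'EOF'
@ IN SOA dns.od.com. dnsadmin.od.com. (
        2019111031 ; serial
        10800      ; refresh (3 hours)
        )
EOF
bump_serial "$zone"
rm -f "$zone"
```

To apply the result you would redirect the output to a new file, review it, and then replace the zone file before restarting `named`.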

Web访问

admin/admin123 [在deployment.yaml的清单里配置]

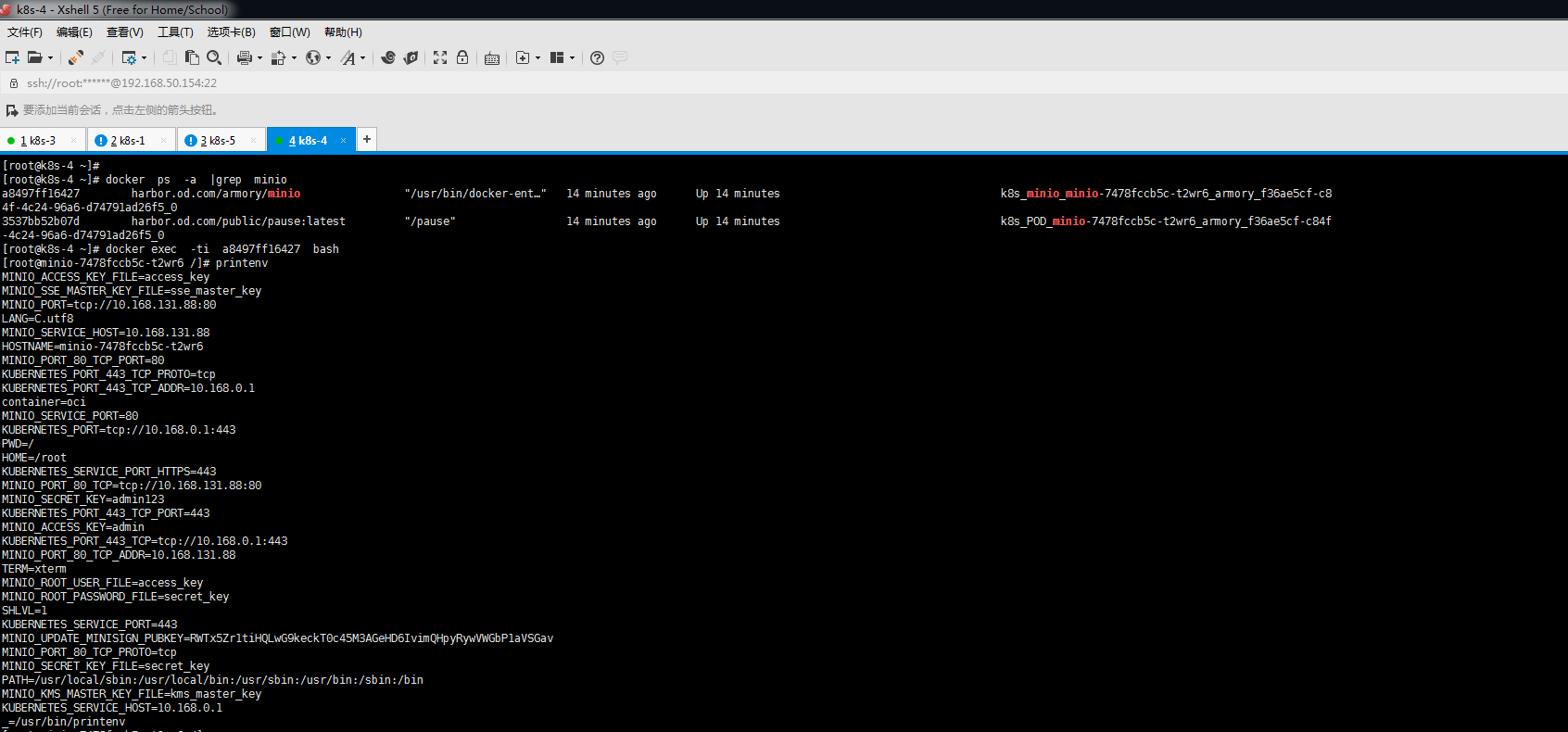

进入容器

Deploying Redis, the cache component

Prepare the image

[root@k8s-5 ~]# docker pull redis:4.0.14

[root@k8s-5 ~]# docker images | grep redis

redis 4.0.14 191c4017dcdd 9 months ago 89.3MB

goharbor/redis-photon v1.8.3 cda8fa1932ec 16 months ago 109MB

[root@k8s-5 ~]# docker tag 191c4017dcdd harbor.od.com/armory/redis:v4.0.14

[root@k8s-5 ~]# docker push harbor.od.com/armory/redis:v4.0.14

Create the directory

[root@k8s-5 ~]# mkdir -p /data/k8s-yaml/armory/redis

[root@k8s-5 ~]# cd /data/k8s-yaml/armory/redis

Prepare the resource manifests

Run the following on the k8s-5 node

[root@k8s-5 redis]# vim deployment.yaml

[root@k8s-5 redis]# cat >deployment.yaml <<'EOF'
kind: Deployment
apiVersion: extensions/v1beta1
metadata:
  labels:
    name: redis
  name: redis
  namespace: armory
spec:
  replicas: 1
  revisionHistoryLimit: 7
  selector:
    matchLabels:
      name: redis
  strategy:
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 1
    type: RollingUpdate
  template:
    metadata:
      labels:
        app: redis
        name: redis
    spec:
      containers:
      - name: redis
        image: harbor.od.com/armory/redis:v4.0.14
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 6379
          protocol: TCP
        terminationMessagePath: /dev/termination-log
        terminationMessagePolicy: File
      imagePullSecrets:
      - name: harbor
      restartPolicy: Always
      schedulerName: default-scheduler
      terminationGracePeriodSeconds: 30
EOF

[root@k8s-5 redis]# vim service.yaml

[root@k8s-5 redis]# cat > service.yaml <<'EOF'
apiVersion: v1
kind: Service
metadata:
  name: redis
  namespace: armory
spec:
  ports:
  - port: 6379
    protocol: TCP
    targetPort: 6379
  selector:
    app: redis
EOF

Apply the resource manifests

[root@k8s-3 ~]# kubectl apply -f http://k8s-yaml.od.com/armory/redis/deployment.yaml

deployment.extensions/redis created

[root@k8s-3 ~]# kubectl apply -f http://k8s-yaml.od.com/armory/redis/service.yaml

service/redis created

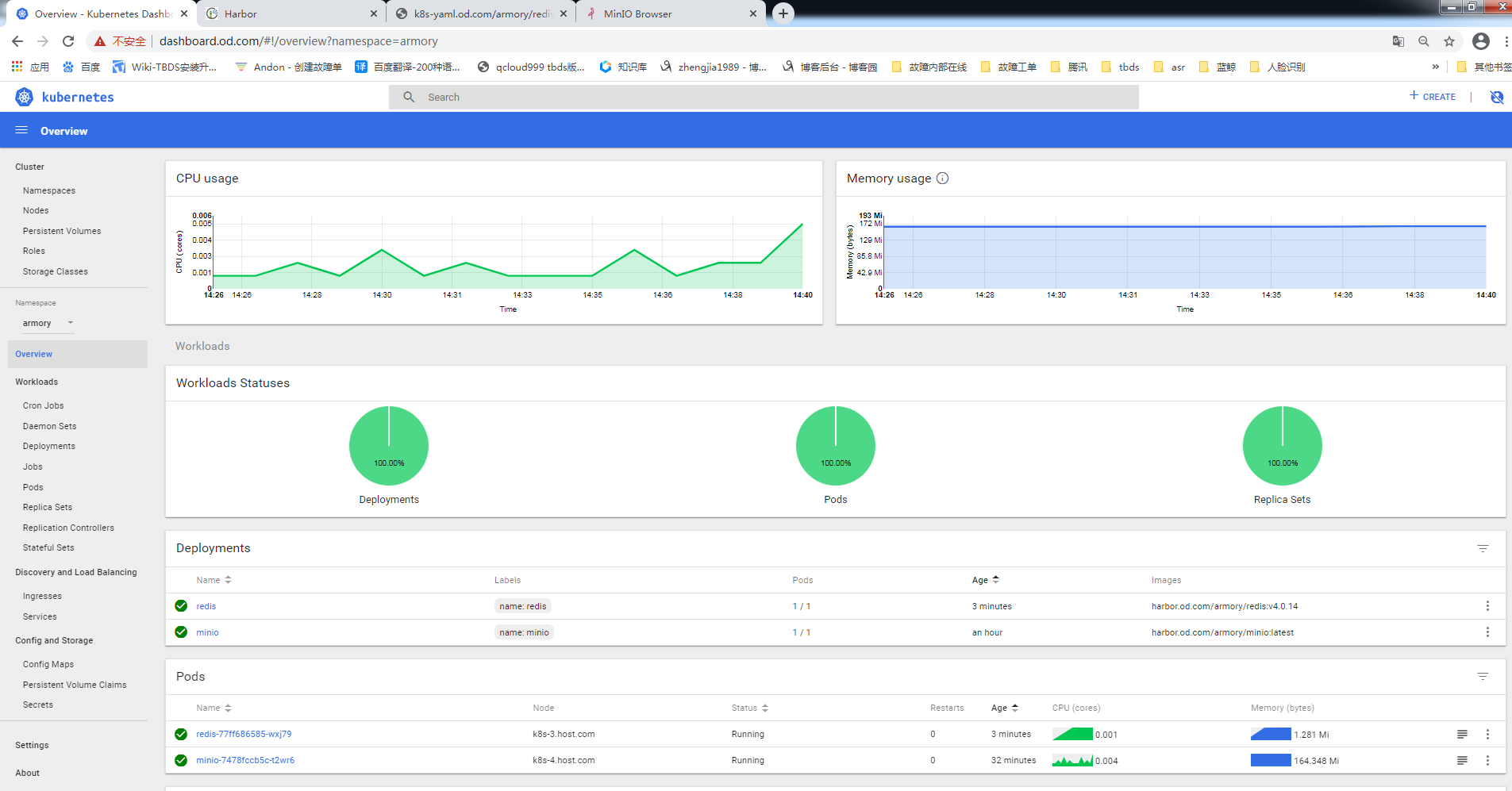

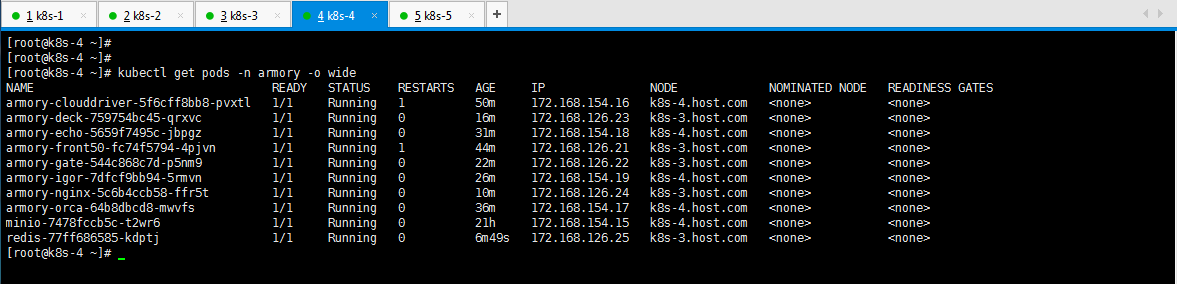

Check the pod status

[root@k8s-3 ~]# kubectl get pods -n armory

NAME READY STATUS RESTARTS AGE

minio-7478fccb5c-t2wr6 1/1 Running 0 30m

redis-77ff686585-wxj79 1/1 Running 0 2m10s

Deploying CloudDriver, the cloud provider driver component

Prepare the image

[root@k8s-5 ~]# docker pull armory/spinnaker-clouddriver-slim:release-1.8.x-14c9664

[root@k8s-5 ~]# docker images | grep clouddriver

armory/spinnaker-clouddriver-slim release-1.8.x-14c9664 edb2507fdb62 2 years ago 662MB

[root@k8s-5 ~]# docker tag edb2507fdb62 harbor.od.com/armory/clouddriver:v1.8.x

[root@k8s-5 ~]# docker push harbor.od.com/armory/clouddriver:v1.8.x

Create the directory

[root@k8s-5 ~]# mkdir /data/k8s-yaml/armory/clouddriver

[root@k8s-5 ~]# cd /data/k8s-yaml/armory/clouddriver

[root@k8s-5 clouddriver]# cat > credentials <<'EOF'

[default]

aws_access_key_id=admin

aws_secret_access_key=admin123

EOF

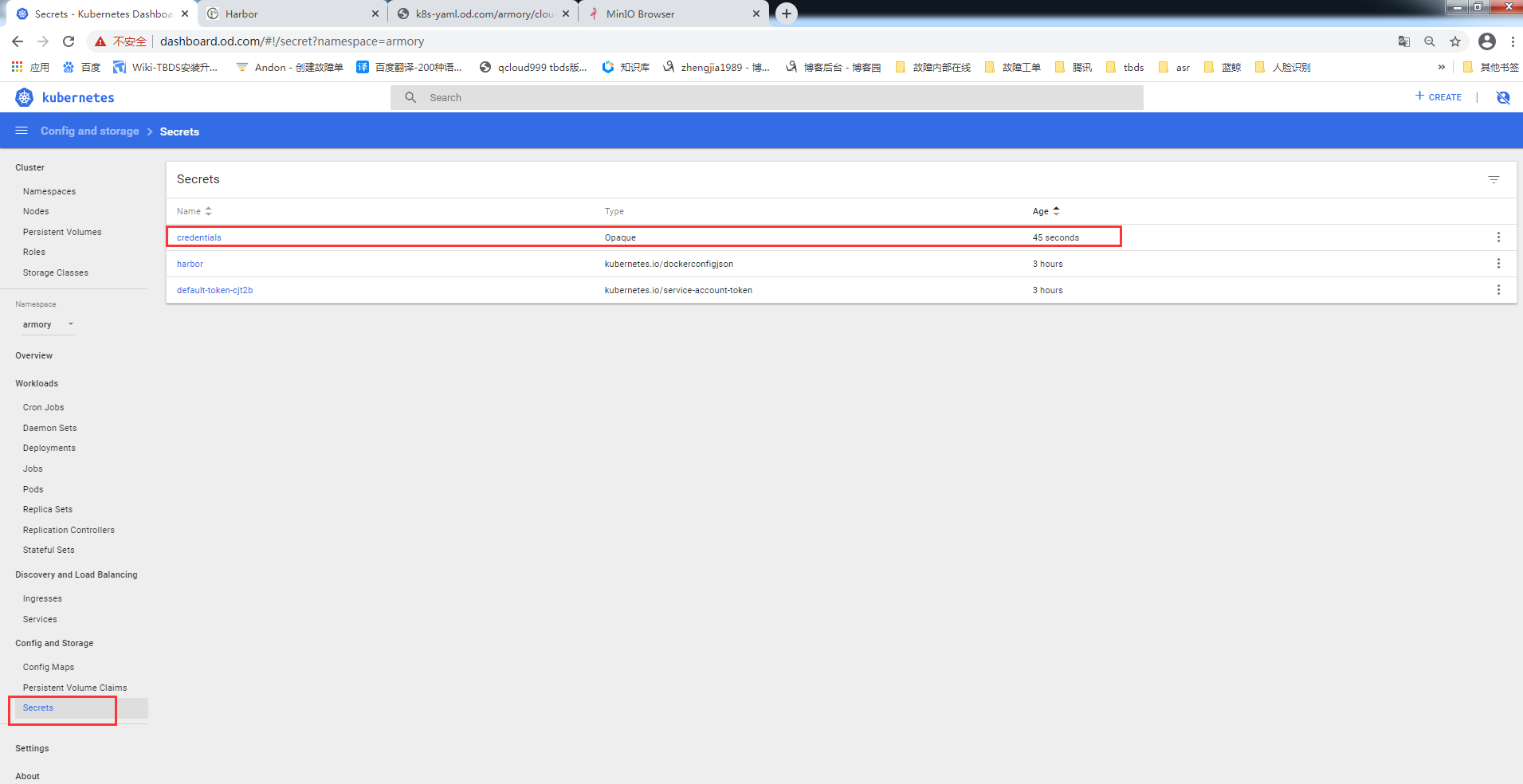

Create the secret (run on any compute node)

[root@k8s-3 ~]# wget http://k8s-yaml.od.com/armory/clouddriver/credentials

[root@k8s-3 ~]# kubectl create secret generic credentials --from-file=./credentials -n armory

secret/credentials created

Prepare the k8s user configuration: issue the certificate and private key

[root@k8s-5 certs]# cd /opt/certs

[root@k8s-5 certs]# vim admin-csr.json

{
    "CN": "cluster-admin",
    "hosts": [
    ],
    "key": {
        "algo": "rsa",
        "size": 2048
    },
    "names": [
        {
            "C": "CN",
            "ST": "beijing",
            "L": "beijing",
            "O": "qianyan",
            "OU": "ops"
        }
    ]
}

[root@k8s-5 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client admin-csr.json |cfssl-json -bare admin

Copy the certificates

[root@k8s-3 ~]# scp k8s-5:/opt/certs/admin*pem .

[root@k8s-3 ~]# scp k8s-5:/opt/certs/ca.pem .

Create the user

[root@k8s-3 ~]# kubectl config set-cluster myk8s --certificate-authority=./ca.pem --embed-certs=true --server=https://192.168.50.10:7443 --kubeconfig=config

[root@k8s-3 ~]# kubectl config set-credentials cluster-admin --client-certificate=./admin.pem --client-key=./admin-key.pem --embed-certs=true --kubeconfig=config

[root@k8s-3 ~]# kubectl config set-context myk8s-context --cluster=myk8s --user=cluster-admin --kubeconfig=config

[root@k8s-3 ~]# kubectl config use-context myk8s-context --kubeconfig=config

[root@k8s-3 ~]# kubectl create clusterrolebinding myk8s-admin --clusterrole=cluster-admin --user=cluster-admin

Local test

[root@k8s-3 ~]# cd /root/.kube/

[root@k8s-3 .kube]# cp /root/config .

[root@k8s-3 .kube]# ll

total 16

drwxr-x--- 3 root root 4096 Dec 2 11:13 cache

-rw------- 1 root root 6229 Jan 26 15:41 config

drwxr-x--- 3 root root 4096 Jan 26 15:25 http-cache

[root@k8s-3 .kube]# kubectl config view

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: DATA+OMITTED

server: https://192.168.50.10:7443

name: myk8s

contexts:

- context:

cluster: myk8s

user: cluster-admin

name: myk8s-context

current-context: myk8s-context

kind: Config

preferences: {}

users:

- name: cluster-admin

user:

client-certificate-data: REDACTED

client-key-data: REDACTED

Verify the cluster-admin user

[root@k8s-5 certs]# mkdir /root/.kube

[root@k8s-5 certs]# cd /root/.kube

[root@k8s-5 .kube]# scp k8s-3:/root/config .

[root@k8s-5 .kube]# scp k8s-3:/opt/kubernetes/server/bin/kubectl /usr/bin/kubectl

[root@k8s-5 .kube]# kubectl get pods -n armory

NAME READY STATUS RESTARTS AGE

minio-7478fccb5c-t2wr6 1/1 Running 0 83m

redis-77ff686585-wxj79 1/1 Running 0 55m

[root@k8s-5 .kube]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-3.host.com Ready master,node 46d v1.15.4

k8s-4.host.com Ready master,node 55d v1.15.4

[root@k8s-5 .kube]# kubectl get pods -n infra

NAME READY STATUS RESTARTS AGE

alertmanager-5d46bdc7b4-6j54m 1/1 Running 0 19d

apollo-adminservice-58fcf84574-cmx9l 1/1 Running 0 10d

apollo-configservice-7f587896f8-sx8cx 1/1 Running 0 10d

apollo-portal-67874d8f74-k8dxn 1/1 Running 0 27d

dubbo-monitor-78695bb6f7-bb74p 1/1 Running 0 7d3h

grafana-7bf4969df6-nktl9 1/1 Running 0 20d

jenkins-5fc5fc5cc-pnnzd 1/1 Running 0 15d

kafka-manager-5b566f8cf8-fntfr 1/1 Running 0 6d1h

kibana-596775d886-tbq99 1/1 Running 0 3d22h

prometheus-986c7b784-ld5fq 1/1 Running 0 21d

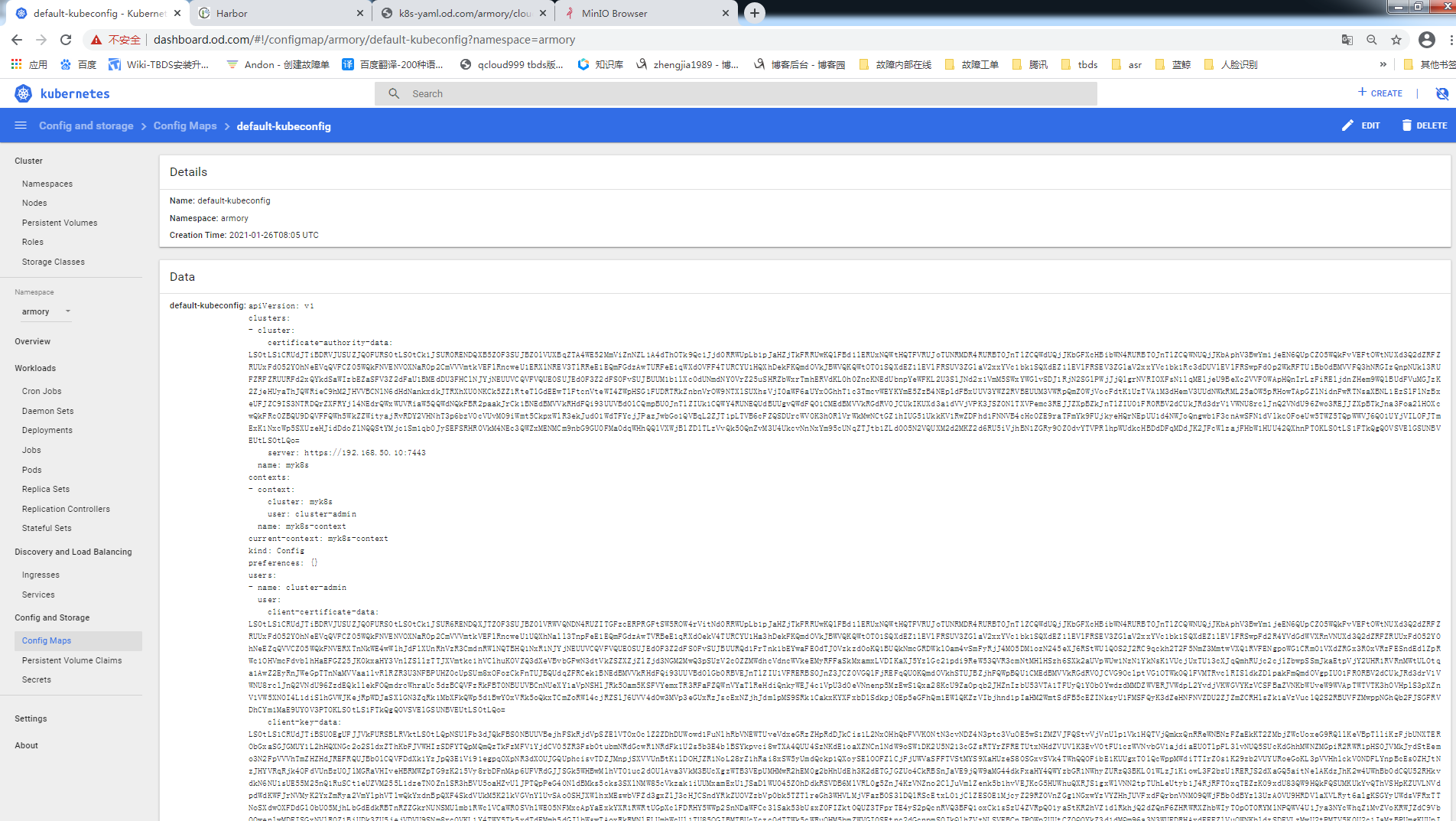

Create the ConfigMap

[root@k8s-3 ~]# cp config default-kubeconfig

[root@k8s-3 ~]# kubectl create cm default-kubeconfig --from-file=default-kubeconfig -n armory

Prepare the resource manifests

[root@k8s-5 ~]# cd /data/k8s-yaml/armory/clouddriver/

[root@k8s-5 clouddriver]# vim init-env.yaml

kind: ConfigMap
apiVersion: v1
metadata:
  name: init-env
  namespace: armory
data:
  API_HOST: http://spinnaker.od.com/api
  ARMORY_ID: c02f0781-92f5-4e80-86db-0ba8fe7b8544
  ARMORYSPINNAKER_CONF_STORE_BUCKET: armory-platform
  ARMORYSPINNAKER_CONF_STORE_PREFIX: front50
  ARMORYSPINNAKER_GCS_ENABLED: "false"
  ARMORYSPINNAKER_S3_ENABLED: "true"
  AUTH_ENABLED: "false"
  AWS_REGION: us-east-1
  BASE_IP: 127.0.0.1
  CLOUDDRIVER_OPTS: -Dspring.profiles.active=armory,configurator,local
  CONFIGURATOR_ENABLED: "false"
  DECK_HOST: http://spinnaker.od.com
  ECHO_OPTS: -Dspring.profiles.active=armory,configurator,local
  GATE_OPTS: -Dspring.profiles.active=armory,configurator,local
  IGOR_OPTS: -Dspring.profiles.active=armory,configurator,local
  PLATFORM_ARCHITECTURE: k8s
  REDIS_HOST: redis://redis:6379
  SERVER_ADDRESS: 0.0.0.0
  SPINNAKER_AWS_DEFAULT_REGION: us-east-1
  SPINNAKER_AWS_ENABLED: "false"
  SPINNAKER_CONFIG_DIR: /home/spinnaker/config
  SPINNAKER_GOOGLE_PROJECT_CREDENTIALS_PATH: ""
  SPINNAKER_HOME: /home/spinnaker
  SPRING_PROFILES_ACTIVE: armory,configurator,local

[root@k8s-5 clouddriver]# vim custom-configmap.yaml

kind: ConfigMap
apiVersion: v1
metadata:
  name: custom-config
  namespace: armory
data:
  clouddriver-local.yml: |
    kubernetes:
      enabled: true
      accounts:
        - name: cluster-admin
          serviceAccount: false
          dockerRegistries:
            - accountName: harbor
              namespace: []
          namespaces:
            - test
            - prod
          kubeconfigFile: /opt/spinnaker/credentials/custom/default-kubeconfig
      primaryAccount: cluster-admin
    dockerRegistry:
      enabled: true
      accounts:
        - name: harbor
          requiredGroupMembership: []
          providerVersion: V1
          insecureRegistry: true
          address: http://harbor.od.com
          username: admin
          password: Harbor12345
      primaryAccount: harbor
    artifacts:
      s3:
        enabled: true
        accounts:
        - name: armory-config-s3-account
          apiEndpoint: http://minio
          apiRegion: us-east-1
      gcs:
        enabled: false
        accounts:
        - name: armory-config-gcs-account
  custom-config.json: ""
  echo-configurator.yml: |
    diagnostics:
      enabled: true
  front50-local.yml: |
    spinnaker:
      s3:
        endpoint: http://minio
  igor-local.yml: |
    jenkins:
      enabled: true
      masters:
        - name: jenkins-admin
          address: http://jenkins.od.com
          username: admin
          password: admin123
      primaryAccount: jenkins-admin
  nginx.conf: |
    gzip on;
    gzip_types text/plain text/css application/json application/x-javascript text/xml application/xml application/xml+rss text/javascript application/vnd.ms-fontobject application/x-font-ttf font/opentype image/svg+xml image/x-icon;
    server {
        listen 80;
        location / {
            proxy_pass http://armory-deck/;
        }
        location /api/ {
            proxy_pass http://armory-gate:8084/;
        }
        rewrite ^/login(.*)$ /api/login$1 last;
        rewrite ^/auth(.*)$ /api/auth$1 last;
    }
  spinnaker-local.yml: |
    services:
      igor:
        enabled: true
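The routing in the `nginx.conf` entry above works in two steps: the server-level `rewrite` rules move `/login...` and `/auth...` under `/api/`, and the `location /api/` block then proxies to `armory-gate:8084` with the `/api/` prefix replaced by `/` (because `proxy_pass` ends with a URI part). The function below is a rough imitation of that behaviour with `sed` and `case`, meant only to show which upstream a path ends up at; `route` is a hypothetical name and query strings are ignored.

```shell
#!/bin/sh
# route PATH
# Prints "<upstream> <path sent to the upstream>" for a request path.
route() {
  # Step 1: the two server-level rewrite rules.
  rt_path=$(printf '%s\n' "$1" | sed -E 's#^/login(.*)$#/api/login\1#; s#^/auth(.*)$#/api/auth\1#')
  # Step 2: location matching plus the proxy_pass prefix replacement.
  case "$rt_path" in
    /api/*) printf 'armory-gate:8084 /%s\n' "${rt_path#/api/}" ;;
    *)      printf 'armory-deck %s\n' "$rt_path" ;;
  esac
}

route /login/callback
route /auth/user
route /applications
```

Running it shows that login and auth requests reach Gate while every other path is served by Deck, which is the split the configuration is intended to achieve.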

[root@k8s-5 clouddriver]# vim default-configmap.yaml

kind: ConfigMap

apiVersion: v1

metadata:

name: default-config

namespace: armory

data:

barometer.yml: |

server:

port: 9092

spinnaker:

redis:

host: ${services.redis.host}

port: ${services.redis.port}

clouddriver-armory.yml: |

aws:

defaultAssumeRole: role/${SPINNAKER_AWS_DEFAULT_ASSUME_ROLE:SpinnakerManagedProfile}

accounts:

- name: default-aws-account

accountId: ${SPINNAKER_AWS_DEFAULT_ACCOUNT_ID:none}

client:

maxErrorRetry: 20

serviceLimits:

cloudProviderOverrides:

aws:

rateLimit: 15.0

implementationLimits:

AmazonAutoScaling:

defaults:

rateLimit: 3.0

AmazonElasticLoadBalancing:

defaults:

rateLimit: 5.0

security.basic.enabled: false

management.security.enabled: false

clouddriver-dev.yml: |

serviceLimits:

defaults:

rateLimit: 2

clouddriver.yml: |

server:

port: ${services.clouddriver.port:7002}

address: ${services.clouddriver.host:localhost}

redis:

connection: ${REDIS_HOST:redis://localhost:6379}

udf:

enabled: ${services.clouddriver.aws.udf.enabled:true}

udfRoot: /opt/spinnaker/config/udf

defaultLegacyUdf: false

default:

account:

env: ${providers.aws.primaryCredentials.name}

aws:

enabled: ${providers.aws.enabled:false}

defaults:

iamRole: ${providers.aws.defaultIAMRole:BaseIAMRole}

defaultRegions:

- name: ${providers.aws.defaultRegion:us-east-1}

defaultFront50Template: ${services.front50.baseUrl}

defaultKeyPairTemplate: ${providers.aws.defaultKeyPairTemplate}

azure:

enabled: ${providers.azure.enabled:false}

accounts:

- name: ${providers.azure.primaryCredentials.name}

clientId: ${providers.azure.primaryCredentials.clientId}

appKey: ${providers.azure.primaryCredentials.appKey}

tenantId: ${providers.azure.primaryCredentials.tenantId}

subscriptionId: ${providers.azure.primaryCredentials.subscriptionId}

google:

enabled: ${providers.google.enabled:false}

accounts:

- name: ${providers.google.primaryCredentials.name}

project: ${providers.google.primaryCredentials.project}

jsonPath: ${providers.google.primaryCredentials.jsonPath}

consul:

enabled: ${providers.google.primaryCredentials.consul.enabled:false}

cf:

enabled: ${providers.cf.enabled:false}

accounts:

- name: ${providers.cf.primaryCredentials.name}

api: ${providers.cf.primaryCredentials.api}

console: ${providers.cf.primaryCredentials.console}

org: ${providers.cf.defaultOrg}

space: ${providers.cf.defaultSpace}

username: ${providers.cf.account.name:}

password: ${providers.cf.account.password:}

kubernetes:

enabled: ${providers.kubernetes.enabled:false}

accounts:

- name: ${providers.kubernetes.primaryCredentials.name}

dockerRegistries:

- accountName: ${providers.kubernetes.primaryCredentials.dockerRegistryAccount}

openstack:

enabled: ${providers.openstack.enabled:false}

accounts:

- name: ${providers.openstack.primaryCredentials.name}

authUrl: ${providers.openstack.primaryCredentials.authUrl}

username: ${providers.openstack.primaryCredentials.username}

password: ${providers.openstack.primaryCredentials.password}

projectName: ${providers.openstack.primaryCredentials.projectName}

domainName: ${providers.openstack.primaryCredentials.domainName:Default}

regions: ${providers.openstack.primaryCredentials.regions}

insecure: ${providers.openstack.primaryCredentials.insecure:false}

userDataFile: ${providers.openstack.primaryCredentials.userDataFile:}

lbaas:

pollTimeout: 60

pollInterval: 5

dockerRegistry:

enabled: ${providers.dockerRegistry.enabled:false}

accounts:

- name: ${providers.dockerRegistry.primaryCredentials.name}

address: ${providers.dockerRegistry.primaryCredentials.address}

username: ${providers.dockerRegistry.primaryCredentials.username:}

passwordFile: ${providers.dockerRegistry.primaryCredentials.passwordFile}

credentials:

primaryAccountTypes: ${providers.aws.primaryCredentials.name}, ${providers.google.primaryCredentials.name}, ${providers.cf.primaryCredentials.name}, ${providers.azure.primaryCredentials.name}

challengeDestructiveActionsEnvironments: ${providers.aws.primaryCredentials.name}, ${providers.google.primaryCredentials.name}, ${providers.cf.primaryCredentials.name}, ${providers.azure.primaryCredentials.name}

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: ${services.spectator.webEndpoint.enabled:false}

prototypeFilter:

path: ${services.spectator.webEndpoint.prototypeFilter.path:}

stackdriver:

enabled: ${services.stackdriver.enabled}

projectName: ${services.stackdriver.projectName}

credentialsPath: ${services.stackdriver.credentialsPath}

stackdriver:

hints:

- name: controller.invocations

labels:

- account

- region

dinghy.yml: ""

echo-armory.yml: |

diagnostics:

enabled: true

id: ${ARMORY_ID:unknown}

armorywebhooks:

enabled: false

forwarding:

baseUrl: http://armory-dinghy:8081

endpoint: v1/webhooks

echo-noncron.yml: |

scheduler:

enabled: false

echo.yml: |

server:

port: ${services.echo.port:8089}

address: ${services.echo.host:localhost}

cassandra:

enabled: ${services.echo.cassandra.enabled:false}

embedded: ${services.cassandra.embedded:false}

host: ${services.cassandra.host:localhost}

spinnaker:

baseUrl: ${services.deck.baseUrl}

cassandra:

enabled: ${services.echo.cassandra.enabled:false}

inMemory:

enabled: ${services.echo.inMemory.enabled:true}

front50:

baseUrl: ${services.front50.baseUrl:http://localhost:8080}

orca:

baseUrl: ${services.orca.baseUrl:http://localhost:8083}

endpoints.health.sensitive: false

slack:

enabled: ${services.echo.notifications.slack.enabled:false}

token: ${services.echo.notifications.slack.token}

spring:

mail:

host: ${mail.host}

mail:

enabled: ${services.echo.notifications.mail.enabled:false}

host: ${services.echo.notifications.mail.host}

from: ${services.echo.notifications.mail.fromAddress}

hipchat:

enabled: ${services.echo.notifications.hipchat.enabled:false}

baseUrl: ${services.echo.notifications.hipchat.url}

token: ${services.echo.notifications.hipchat.token}

twilio:

enabled: ${services.echo.notifications.sms.enabled:false}

baseUrl: ${services.echo.notifications.sms.url:https://api.twilio.com/}

account: ${services.echo.notifications.sms.account}

token: ${services.echo.notifications.sms.token}

from: ${services.echo.notifications.sms.from}

scheduler:

enabled: ${services.echo.cron.enabled:true}

threadPoolSize: 20

triggeringEnabled: true

pipelineConfigsPoller:

enabled: true

pollingIntervalMs: 30000

cron:

timezone: ${services.echo.cron.timezone}

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: ${services.spectator.webEndpoint.enabled:false}

prototypeFilter:

path: ${services.spectator.webEndpoint.prototypeFilter.path:}

stackdriver:

enabled: ${services.stackdriver.enabled}

projectName: ${services.stackdriver.projectName}

credentialsPath: ${services.stackdriver.credentialsPath}

webhooks:

artifacts:

enabled: true

fetch.sh: |+

CONFIG_LOCATION=${SPINNAKER_HOME:-"/opt/spinnaker"}/config

CONTAINER=$1

rm -f /opt/spinnaker/config/*.yml

mkdir -p ${CONFIG_LOCATION}

for filename in /opt/spinnaker/config/default/*.yml; do

cp $filename ${CONFIG_LOCATION}

done

if [ -d /opt/spinnaker/config/custom ]; then

for filename in /opt/spinnaker/config/custom/*; do

cp $filename ${CONFIG_LOCATION}

done

fi

add_ca_certs() {

ca_cert_path="$1"

jks_path="$2"

alias="$3"

if [[ "$(whoami)" != "root" ]]; then

echo "INFO: I do not have proper permisions to add CA roots"

return

fi

if [[ ! -f ${ca_cert_path} ]]; then

echo "INFO: No CA cert found at ${ca_cert_path}"

return

fi

keytool -importcert \

-file ${ca_cert_path} \

-keystore ${jks_path} \

-alias ${alias} \

-storepass changeit \

-noprompt

}

if [ `which keytool` ]; then

echo "INFO: Keytool found adding certs where appropriate"

add_ca_certs "${CONFIG_LOCATION}/ca.crt" "/etc/ssl/certs/java/cacerts" "custom-ca"

else

echo "INFO: Keytool not found, not adding any certs/private keys"

fi

saml_pem_path="/opt/spinnaker/config/custom/saml.pem"

saml_pkcs12_path="/tmp/saml.pkcs12"

saml_jks_path="${CONFIG_LOCATION}/saml.jks"

x509_ca_cert_path="/opt/spinnaker/config/custom/x509ca.crt"

x509_client_cert_path="/opt/spinnaker/config/custom/x509client.crt"

x509_jks_path="${CONFIG_LOCATION}/x509.jks"

x509_nginx_cert_path="/opt/nginx/certs/ssl.crt"

if [ "${CONTAINER}" == "gate" ]; then

if [ -f ${saml_pem_path} ]; then

echo "Loading ${saml_pem_path} into ${saml_jks_path}"

openssl pkcs12 -export -out ${saml_pkcs12_path} -in ${saml_pem_path} -password pass:changeit -name saml

keytool -genkey -v -keystore ${saml_jks_path} -alias saml \

-keyalg RSA -keysize 2048 -validity 10000 \

-storepass changeit -keypass changeit -dname "CN=armory"

keytool -importkeystore \

-srckeystore ${saml_pkcs12_path} \

-srcstoretype PKCS12 \

-srcstorepass changeit \

-destkeystore ${saml_jks_path} \

-deststoretype JKS \

-storepass changeit \

-alias saml \

-destalias saml \

-noprompt

else

echo "No SAML IDP pemfile found at ${saml_pem_path}"

fi

if [ -f ${x509_ca_cert_path} ]; then

echo "Loading ${x509_ca_cert_path} into ${x509_jks_path}"

add_ca_certs ${x509_ca_cert_path} ${x509_jks_path} "ca"

else

echo "No x509 CA cert found at ${x509_ca_cert_path}"

fi

if [ -f ${x509_client_cert_path} ]; then

echo "Loading ${x509_client_cert_path} into ${x509_jks_path}"

add_ca_certs ${x509_client_cert_path} ${x509_jks_path} "client"

else

echo "No x509 Client cert found at ${x509_client_cert_path}"

fi

if [ -f ${x509_nginx_cert_path} ]; then

echo "Creating a self-signed CA (EXPIRES IN 360 DAYS) with java keystore: ${x509_jks_path}"

echo -e "\n\n\n\n\n\ny\n" | keytool -genkey -keyalg RSA -alias server -keystore keystore.jks -storepass changeit -validity 360 -keysize 2048

keytool -importkeystore \

-srckeystore keystore.jks \

-srcstorepass changeit \

-destkeystore "${x509_jks_path}" \

-storepass changeit \

-srcalias server \

-destalias server \

-noprompt

else

echo "No x509 nginx cert found at ${x509_nginx_cert_path}"

fi

fi

if [ "${CONTAINER}" == "nginx" ]; then

nginx_conf_path="/opt/spinnaker/config/default/nginx.conf"

if [ -f ${nginx_conf_path} ]; then

cp ${nginx_conf_path} /etc/nginx/nginx.conf

fi

fi

fiat.yml: |-

server:

port: ${services.fiat.port:7003}

address: ${services.fiat.host:localhost}

redis:

connection: ${services.redis.connection:redis://localhost:6379}

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: ${services.spectator.webEndpoint.enabled:false}

prototypeFilter:

path: ${services.spectator.webEndpoint.prototypeFilter.path:}

stackdriver:

enabled: ${services.stackdriver.enabled}

projectName: ${services.stackdriver.projectName}

credentialsPath: ${services.stackdriver.credentialsPath}

hystrix:

command:

default.execution.isolation.thread.timeoutInMilliseconds: 20000

logging:

level:

com.netflix.spinnaker.fiat: DEBUG

front50-armory.yml: |

spinnaker:

redis:

enabled: true

host: redis

front50.yml: |

server:

port: ${services.front50.port:8080}

address: ${services.front50.host:localhost}

hystrix:

command:

default.execution.isolation.thread.timeoutInMilliseconds: 15000

cassandra:

enabled: ${services.front50.cassandra.enabled:false}

embedded: ${services.cassandra.embedded:false}

host: ${services.cassandra.host:localhost}

aws:

simpleDBEnabled: ${providers.aws.simpleDBEnabled:false}

defaultSimpleDBDomain: ${providers.aws.defaultSimpleDBDomain}

spinnaker:

cassandra:

enabled: ${services.front50.cassandra.enabled:false}

host: ${services.cassandra.host:localhost}

port: ${services.cassandra.port:9042}

cluster: ${services.cassandra.cluster:CASS_SPINNAKER}

keyspace: front50

name: global

redis:

enabled: ${services.front50.redis.enabled:false}

gcs:

enabled: ${services.front50.gcs.enabled:false}

bucket: ${services.front50.storage_bucket:}

bucketLocation: ${services.front50.bucket_location:}

rootFolder: ${services.front50.rootFolder:front50}

project: ${providers.google.primaryCredentials.project}

jsonPath: ${providers.google.primaryCredentials.jsonPath}

s3:

enabled: ${services.front50.s3.enabled:false}

bucket: ${services.front50.storage_bucket:}

rootFolder: ${services.front50.rootFolder:front50}

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: ${services.spectator.webEndpoint.enabled:false}

prototypeFilter:

path: ${services.spectator.webEndpoint.prototypeFilter.path:}

stackdriver:

enabled: ${services.stackdriver.enabled}

projectName: ${services.stackdriver.projectName}

credentialsPath: ${services.stackdriver.credentialsPath}

stackdriver:

hints:

- name: controller.invocations

labels:

- application

- cause

- name: aws.request.httpRequestTime

labels:

- status

- exception

- AWSErrorCode

- name: aws.request.requestSigningTime

labels:

- exception

gate-armory.yml: |+

lighthouse:

baseUrl: http://${DEFAULT_DNS_NAME:lighthouse}:5000

gate.yml: |

server:

port: ${services.gate.port:8084}

address: ${services.gate.host:localhost}

redis:

connection: ${REDIS_HOST:redis://localhost:6379}

configuration:

secure: true

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: ${services.spectator.webEndpoint.enabled:false}

prototypeFilter:

path: ${services.spectator.webEndpoint.prototypeFilter.path:}

stackdriver:

enabled: ${services.stackdriver.enabled}

projectName: ${services.stackdriver.projectName}

credentialsPath: ${services.stackdriver.credentialsPath}

stackdriver:

hints:

- name: EurekaOkClient_Request

labels:

- cause

- reason

- status

igor-nonpolling.yml: |

jenkins:

polling:

enabled: false

igor.yml: |

server:

port: ${services.igor.port:8088}

address: ${services.igor.host:localhost}

jenkins:

enabled: ${services.jenkins.enabled:false}

masters:

- name: ${services.jenkins.defaultMaster.name}

address: ${services.jenkins.defaultMaster.baseUrl}

username: ${services.jenkins.defaultMaster.username}

password: ${services.jenkins.defaultMaster.password}

csrf: ${services.jenkins.defaultMaster.csrf:false}

travis:

enabled: ${services.travis.enabled:false}

masters:

- name: ${services.travis.defaultMaster.name}

baseUrl: ${services.travis.defaultMaster.baseUrl}

address: ${services.travis.defaultMaster.address}

githubToken: ${services.travis.defaultMaster.githubToken}

dockerRegistry:

enabled: ${providers.dockerRegistry.enabled:false}

redis:

connection: ${REDIS_HOST:redis://localhost:6379}

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: ${services.spectator.webEndpoint.enabled:false}

prototypeFilter:

path: ${services.spectator.webEndpoint.prototypeFilter.path:}

stackdriver:

enabled: ${services.stackdriver.enabled}

projectName: ${services.stackdriver.projectName}

credentialsPath: ${services.stackdriver.credentialsPath}

stackdriver:

hints:

- name: controller.invocations

labels:

- master

kayenta-armory.yml: |

kayenta:

aws:

enabled: ${ARMORYSPINNAKER_S3_ENABLED:false}

accounts:

- name: aws-s3-storage

bucket: ${ARMORYSPINNAKER_CONF_STORE_BUCKET}

rootFolder: kayenta

supportedTypes:

- OBJECT_STORE

- CONFIGURATION_STORE

s3:

enabled: ${ARMORYSPINNAKER_S3_ENABLED:false}

google:

enabled: ${ARMORYSPINNAKER_GCS_ENABLED:false}

accounts:

- name: cloud-armory

bucket: ${ARMORYSPINNAKER_CONF_STORE_BUCKET}

rootFolder: kayenta-prod

supportedTypes:

- METRICS_STORE

- OBJECT_STORE

- CONFIGURATION_STORE

gcs:

enabled: ${ARMORYSPINNAKER_GCS_ENABLED:false}

kayenta.yml: |2

server:

port: 8090

kayenta:

atlas:

enabled: false

google:

enabled: false

aws:

enabled: false

datadog:

enabled: false

prometheus:

enabled: false

gcs:

enabled: false

s3:

enabled: false

stackdriver:

enabled: false

memory:

enabled: false

configbin:

enabled: false

keiko:

queue:

redis:

queueName: kayenta.keiko.queue

deadLetterQueueName: kayenta.keiko.queue.deadLetters

redis:

connection: ${REDIS_HOST:redis://localhost:6379}

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: true

swagger:

enabled: true

title: Kayenta API

description:

contact:

patterns:

- /admin.*

- /canary.*

- /canaryConfig.*

- /canaryJudgeResult.*

- /credentials.*

- /fetch.*

- /health

- /judges.*

- /metadata.*

- /metricSetList.*

- /metricSetPairList.*

- /pipeline.*

security.basic.enabled: false

management.security.enabled: false

nginx.conf: |

# Main nginx configuration. The http block pulls in the site configuration from /etc/nginx/conf.d/*.conf; the stream block at the end forwards raw TCP connections on port 8085 to armory-gate:8085 unchanged.

user nginx;

worker_processes 1;

error_log /var/log/nginx/error.log warn;

pid /var/run/nginx.pid;

events {

worker_connections 1024;

}

http {

include /etc/nginx/mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

access_log /var/log/nginx/access.log main;

sendfile on;

keepalive_timeout 65;

include /etc/nginx/conf.d/*.conf;

}

stream {

upstream gate_api {

server armory-gate:8085;

}

server {

listen 8085;

proxy_pass gate_api;

}

}

nginx.http.conf: |

gzip on;

gzip_types text/plain text/css application/json application/x-javascript text/xml application/xml application/xml+rss text/javascript application/vnd.ms-fontobject application/x-font-ttf font/opentype image/svg+xml image/x-icon;

server {

listen 80;

listen [::]:80;

location / {

proxy_pass http://armory-deck/;

}

location /api/ {

proxy_pass http://armory-gate:8084/;

}

location /slack/ {

proxy_pass http://armory-platform:10000/;

}

rewrite ^/login(.*)$ /api/login$1 last;

rewrite ^/auth(.*)$ /api/auth$1 last;

}

nginx.https.conf: |

gzip on;

gzip_types text/plain text/css application/json application/x-javascript text/xml application/xml application/xml+rss text/javascript application/vnd.ms-fontobject application/x-font-ttf font/opentype image/svg+xml image/x-icon;

server {

listen 80;

listen [::]:80;

return 301 https://$host$request_uri;

}

server {

listen 443 ssl;

listen [::]:443 ssl;

ssl on;

ssl_certificate /opt/nginx/certs/ssl.crt;

ssl_certificate_key /opt/nginx/certs/ssl.key;

location / {

proxy_pass http://armory-deck/;

}

location /api/ {

proxy_pass http://armory-gate:8084/;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $proxy_protocol_addr;

proxy_set_header X-Forwarded-For $proxy_protocol_addr;

proxy_set_header X-Forwarded-Proto $scheme;

}

location /slack/ {

proxy_pass http://armory-platform:10000/;

}

rewrite ^/login(.*)$ /api/login$1 last;

rewrite ^/auth(.*)$ /api/auth$1 last;

}

orca-armory.yml: |

# Armory-specific Orca settings: service endpoints plus preconfigured webhook stages, one pipeline policy check against lighthouse and four Jira stages (create, update, transition, comment).

mine:

baseUrl: http://${services.barometer.host}:${services.barometer.port}

pipelineTemplate:

enabled: ${features.pipelineTemplates.enabled:false}

jinja:

enabled: true

kayenta:

enabled: ${services.kayenta.enabled:false}

baseUrl: ${services.kayenta.baseUrl}

jira:

enabled: ${features.jira.enabled:false}

basicAuth: "Basic ${features.jira.basicAuthToken}"

url: ${features.jira.createIssueUrl}

webhook:

preconfigured:

- label: Enforce Pipeline Policy

description: Checks pipeline configuration against policy requirements

type: enforcePipelinePolicy

enabled: ${features.certifiedPipelines.enabled:false}

url: "http://lighthouse:5000/v1/pipelines/${execution.application}/${execution.pipelineConfigId}?check_policy=yes"

headers:

Accept:

- application/json

method: GET

waitForCompletion: true

statusUrlResolution: getMethod

statusJsonPath: $.status

successStatuses: pass

canceledStatuses:

terminalStatuses: TERMINAL

- label: "Jira: Create Issue"

description: Enter a Jira ticket when this pipeline runs

type: createJiraIssue

enabled: ${jira.enabled}

url: ${jira.url}

customHeaders:

"Content-Type": application/json

Authorization: ${jira.basicAuth}

method: POST

parameters:

- name: summary

label: Issue Summary

description: A short summary of your issue.

- name: description

label: Issue Description

description: A longer description of your issue.

- name: projectKey

label: Project key

description: The key of your JIRA project.

- name: type

label: Issue Type

description: The type of your issue, e.g. "Task", "Story", etc.

payload: |

{

"fields" : {

"description": "${parameterValues['description']}",

"issuetype": {

"name": "${parameterValues['type']}"

},

"project": {

"key": "${parameterValues['projectKey']}"

},

"summary": "${parameterValues['summary']}"

}

}

waitForCompletion: false

- label: "Jira: Update Issue"

description: Update a previously created Jira Issue

type: updateJiraIssue

enabled: ${jira.enabled}

url: "${execution.stages.?[type == 'createJiraIssue'][0]['context']['buildInfo']['self']}"

customHeaders:

"Content-Type": application/json

Authorization: ${jira.basicAuth}

method: PUT

parameters:

- name: summary

label: Issue Summary

description: A short summary of your issue.

- name: description

label: Issue Description

description: A longer description of your issue.

payload: |

{

"fields" : {

"description": "${parameterValues['description']}",

"summary": "${parameterValues['summary']}"

}

}

waitForCompletion: false

- label: "Jira: Transition Issue"

description: Change state of existing Jira Issue

type: transitionJiraIssue

enabled: ${jira.enabled}

url: "${execution.stages.?[type == 'createJiraIssue'][0]['context']['buildInfo']['self']}/transitions"

customHeaders:

"Content-Type": application/json

Authorization: ${jira.basicAuth}

method: POST

parameters:

- name: newStateID

label: New State ID

description: The ID of the state you want to transition the issue to.

payload: |

{

"transition" : {

"id" : "${parameterValues['newStateID']}"

}

}

waitForCompletion: false

- label: "Jira: Add Comment"

description: Add a comment to an existing Jira Issue

type: commentJiraIssue

enabled: ${jira.enabled}

url: "${execution.stages.?[type == 'createJiraIssue'][0]['context']['buildInfo']['self']}/comment"

customHeaders:

"Content-Type": application/json

Authorization: ${jira.basicAuth}

method: POST

parameters:

- name: body

label: Comment body

description: The text body of the comment.

payload: |

{

"body" : "${parameterValues['body']}"

}

waitForCompletion: false

orca.yml: |

server:

port: ${services.orca.port:8083}

address: ${services.orca.host:localhost}

oort:

baseUrl: ${services.oort.baseUrl:localhost:7002}

front50:

baseUrl: ${services.front50.baseUrl:localhost:8080}

mort:

baseUrl: ${services.mort.baseUrl:localhost:7002}

kato:

baseUrl: ${services.kato.baseUrl:localhost:7002}

bakery:

baseUrl: ${services.bakery.baseUrl:localhost:8087}

extractBuildDetails: ${services.bakery.extractBuildDetails:true}

allowMissingPackageInstallation: ${services.bakery.allowMissingPackageInstallation:true}

echo:

enabled: ${services.echo.enabled:false}

baseUrl: ${services.echo.baseUrl:8089}

igor:

baseUrl: ${services.igor.baseUrl:8088}

flex:

baseUrl: http://not-a-host

default:

bake:

account: ${providers.aws.primaryCredentials.name}

securityGroups:

vpc:

securityGroups:

redis:

connection: ${REDIS_HOST:redis://localhost:6379}

tasks:

executionWindow:

timezone: ${services.orca.timezone}

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: ${services.spectator.webEndpoint.enabled:false}

prototypeFilter:

path: ${services.spectator.webEndpoint.prototypeFilter.path:}

stackdriver:

enabled: ${services.stackdriver.enabled}

projectName: ${services.stackdriver.projectName}

credentialsPath: ${services.stackdriver.credentialsPath}

stackdriver:

hints:

- name: controller.invocations

labels:

- application

rosco-armory.yml: |

# Armory-specific overrides for Rosco. Assuming this file is loaded as a Spring profile on top of rosco.yml, it raises rosco.jobs.local.timeoutMinutes from 30 to 60.

redis:

timeout: 50000

rosco:

jobs:

local:

timeoutMinutes: 60

rosco.yml: |

server:

port: ${services.rosco.port:8087}

address: ${services.rosco.host:localhost}

redis:

connection: ${REDIS_HOST:redis://localhost:6379}

aws:

enabled: ${providers.aws.enabled:false}

docker:

enabled: ${services.docker.enabled:false}

bakeryDefaults:

targetRepository: ${services.docker.targetRepository}

google:

enabled: ${providers.google.enabled:false}

accounts:

- name: ${providers.google.primaryCredentials.name}

project: ${providers.google.primaryCredentials.project}

jsonPath: ${providers.google.primaryCredentials.jsonPath}

gce:

bakeryDefaults:

zone: ${providers.google.defaultZone}

rosco:

configDir: ${services.rosco.configDir}

jobs:

local:

timeoutMinutes: 30

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: ${services.spectator.webEndpoint.enabled:false}

prototypeFilter:

path: ${services.spectator.webEndpoint.prototypeFilter.path:}

stackdriver:

enabled: ${services.stackdriver.enabled}

projectName: ${services.stackdriver.projectName}

credentialsPath: ${services.stackdriver.credentialsPath}

stackdriver:

hints:

- name: bakes

labels:

- success

spinnaker-armory.yml: |

armory:

architecture: 'k8s'

features:

artifacts:

enabled: true

pipelineTemplates:

enabled: ${PIPELINE_TEMPLATES_ENABLED:false}

infrastructureStages:

enabled: ${INFRA_ENABLED:false}

certifiedPipelines:

enabled: ${CERTIFIED_PIPELINES_ENABLED:false}

configuratorEnabled:

enabled: true

configuratorWizard:

enabled: true

configuratorCerts:

enabled: true

loadtestStage:

enabled: ${LOADTEST_ENABLED:false}

jira:

enabled: ${JIRA_ENABLED:false}

basicAuthToken: ${JIRA_BASIC_AUTH}

url: ${JIRA_URL}

login: ${JIRA_LOGIN}

password: ${JIRA_PASSWORD}

slaEnabled:

enabled: ${SLA_ENABLED:false}

chaosMonkey:

enabled: ${CHAOS_ENABLED:false}

armoryPlatform:

enabled: ${PLATFORM_ENABLED:false}

uiEnabled: ${PLATFORM_UI_ENABLED:false}

services:

default:

host: ${DEFAULT_DNS_NAME:localhost}

clouddriver:

host: ${DEFAULT_DNS_NAME:armory-clouddriver}

entityTags:

enabled: false

configurator:

baseUrl: http://${CONFIGURATOR_HOST:armory-configurator}:8069

echo:

host: ${DEFAULT_DNS_NAME:armory-echo}

deck:

gateUrl: ${API_HOST:service.default.host}

baseUrl: ${DECK_HOST:armory-deck}

dinghy:

enabled: ${DINGHY_ENABLED:false}

host: ${DEFAULT_DNS_NAME:armory-dinghy}

baseUrl: ${services.default.protocol}://${services.dinghy.host}:${services.dinghy.port}

port: 8081

front50:

host: ${DEFAULT_DNS_NAME:armory-front50}

cassandra:

enabled: false

redis:

enabled: true

gcs:

enabled: ${ARMORYSPINNAKER_GCS_ENABLED:false}

s3:

enabled: ${ARMORYSPINNAKER_S3_ENABLED:false}

storage_bucket: ${ARMORYSPINNAKER_CONF_STORE_BUCKET}

rootFolder: ${ARMORYSPINNAKER_CONF_STORE_PREFIX:front50}

gate:

host: ${DEFAULT_DNS_NAME:armory-gate}

igor:

host: ${DEFAULT_DNS_NAME:armory-igor}

kayenta:

enabled: true

host: ${DEFAULT_DNS_NAME:armory-kayenta}

canaryConfigStore: true

port: 8090

baseUrl: ${services.default.protocol}://${services.kayenta.host}:${services.kayenta.port}

metricsStore: ${METRICS_STORE:stackdriver}

metricsAccountName: ${METRICS_ACCOUNT_NAME}

storageAccountName: ${STORAGE_ACCOUNT_NAME}

atlasWebComponentsUrl: ${ATLAS_COMPONENTS_URL:}

lighthouse:

host: ${DEFAULT_DNS_NAME:armory-lighthouse}

port: 5000

baseUrl: ${services.default.protocol}://${services.lighthouse.host}:${services.lighthouse.port}

orca:

host: ${DEFAULT_DNS_NAME:armory-orca}

platform:

enabled: ${PLATFORM_ENABLED:false}

host: ${DEFAULT_DNS_NAME:armory-platform}

baseUrl: ${services.default.protocol}://${services.platform.host}:${services.platform.port}

port: 5001

rosco:

host: ${DEFAULT_DNS_NAME:armory-rosco}

enabled: true

configDir: /opt/spinnaker/config/packer

bakery:

allowMissingPackageInstallation: true

barometer:

enabled: ${BAROMETER_ENABLED:false}

host: ${DEFAULT_DNS_NAME:armory-barometer}

baseUrl: ${services.default.protocol}://${services.barometer.host}:${services.barometer.port}

port: 9092

newRelicEnabled: ${NEW_RELIC_ENABLED:false}

redis:

host: redis

port: 6379

connection: ${REDIS_HOST:redis://localhost:6379}

fiat:

enabled: ${FIAT_ENABLED:false}

host: ${DEFAULT_DNS_NAME:armory-fiat}

port: 7003

baseUrl: ${services.default.protocol}://${services.fiat.host}:${services.fiat.port}

providers:

aws:

enabled: ${SPINNAKER_AWS_ENABLED:true}

defaultRegion: ${SPINNAKER_AWS_DEFAULT_REGION:us-west-2}

defaultIAMRole: ${SPINNAKER_AWS_DEFAULT_IAM_ROLE:SpinnakerInstanceProfile}

defaultAssumeRole: ${SPINNAKER_AWS_DEFAULT_ASSUME_ROLE:SpinnakerManagedProfile}

primaryCredentials:

name: ${SPINNAKER_AWS_DEFAULT_ACCOUNT:default-aws-account}

kubernetes:

proxy: localhost:8001

apiPrefix: api/v1/proxy/namespaces/kube-system/services/kubernetes-dashboard/#

spinnaker.yml: |2

global:

spinnaker:

timezone: 'America/Los_Angeles'

architecture: ${PLATFORM_ARCHITECTURE}

services:

default:

host: localhost

protocol: http

clouddriver:

host: ${services.default.host}

port: 7002

baseUrl: ${services.default.protocol}://${services.clouddriver.host}:${services.clouddriver.port}

aws:

udf:

enabled: true

echo:

enabled: true

host: ${services.default.host}

port: 8089

baseUrl: ${services.default.protocol}://${services.echo.host}:${services.echo.port}

cassandra:

enabled: false

inMemory:

enabled: true

cron:

enabled: true

timezone: ${global.spinnaker.timezone}

notifications:

mail:

enabled: false

host: # the smtp host

fromAddress: # the address from which emails are sent

hipchat:

enabled: false

url: # the hipchat server to connect to

token: # the hipchat auth token

botName: # the username of the bot

sms:

enabled: false

account: # twilio account id

token: # twilio auth token

from: # the phone number from which SMS messages are sent

slack:

enabled: false

token: # the API token for the bot

botName: # the username of the bot

deck:

host: ${services.default.host}

port: 9000

baseUrl: ${services.default.protocol}://${services.deck.host}:${services.deck.port}

gateUrl: ${API_HOST:services.gate.baseUrl}

bakeryUrl: ${services.bakery.baseUrl}

timezone: ${global.spinnaker.timezone}

auth:

enabled: ${AUTH_ENABLED:false}

fiat:

enabled: false

host: ${services.default.host}

port: 7003

baseUrl: ${services.default.protocol}://${services.fiat.host}:${services.fiat.port}

front50:

host: ${services.default.host}

port: 8080

baseUrl: ${services.default.protocol}://${services.front50.host}:${services.front50.port}

storage_bucket: ${SPINNAKER_DEFAULT_STORAGE_BUCKET:}

bucket_location:

bucket_root: front50

cassandra:

enabled: false

redis:

enabled: false

gcs:

enabled: false

s3:

enabled: false

gate:

host: ${services.default.host}

port: 8084

baseUrl: ${services.default.protocol}://${services.gate.host}:${services.gate.port}

igor:

enabled: false

host: ${services.default.host}

port: 8088

baseUrl: ${services.default.protocol}://${services.igor.host}:${services.igor.port}

kato:

host: ${services.clouddriver.host}

port: ${services.clouddriver.port}

baseUrl: ${services.clouddriver.baseUrl}

mort:

host: ${services.clouddriver.host}

port: ${services.clouddriver.port}

baseUrl: ${services.clouddriver.baseUrl}

orca:

host: ${services.default.host}

port: 8083

baseUrl: ${services.default.protocol}://${services.orca.host}:${services.orca.port}

timezone: ${global.spinnaker.timezone}

enabled: true

oort:

host: ${services.clouddriver.host}

port: ${services.clouddriver.port}

baseUrl: ${services.clouddriver.baseUrl}

rosco:

host: ${services.default.host}

port: 8087

baseUrl: ${services.default.protocol}://${services.rosco.host}:${services.rosco.port}

configDir: /opt/rosco/config/packer

bakery:

host: ${services.rosco.host}

port: ${services.rosco.port}

baseUrl: ${services.rosco.baseUrl}

extractBuildDetails: true

allowMissingPackageInstallation: false

docker:

targetRepository: # Optional, but expected in spinnaker-local.yml if specified.

jenkins:

enabled: ${services.igor.enabled:false}

defaultMaster:

name: Jenkins

baseUrl: # Expected in spinnaker-local.yml

username: # Expected in spinnaker-local.yml

password: # Expected in spinnaker-local.yml

redis:

host: redis

port: 6379

connection: ${REDIS_HOST:redis://localhost:6379}

cassandra:

host: ${services.default.host}

port: 9042

embedded: false

cluster: CASS_SPINNAKER

travis:

enabled: false

defaultMaster:

name: ci # The display name for this server. Gets prefixed with "travis-"

baseUrl: https://travis-ci.com

address: https://api.travis-ci.org

githubToken: # A GitHub token with the scopes Travis currently requires.

spectator:

webEndpoint:

enabled: false

stackdriver:

enabled: ${SPINNAKER_STACKDRIVER_ENABLED:false}

projectName: ${SPINNAKER_STACKDRIVER_PROJECT_NAME:${providers.google.primaryCredentials.project}}

credentialsPath: ${SPINNAKER_STACKDRIVER_CREDENTIALS_PATH:${providers.google.primaryCredentials.jsonPath}}

providers:

aws:

enabled: ${SPINNAKER_AWS_ENABLED:false}

simpleDBEnabled: false

defaultRegion: ${SPINNAKER_AWS_DEFAULT_REGION:us-west-2}

defaultIAMRole: BaseIAMRole

defaultSimpleDBDomain: CLOUD_APPLICATIONS

primaryCredentials:

name: default

defaultKeyPairTemplate: "{{name}}-keypair"

google:

enabled: ${SPINNAKER_GOOGLE_ENABLED:false}

defaultRegion: ${SPINNAKER_GOOGLE_DEFAULT_REGION:us-central1}

defaultZone: ${SPINNAKER_GOOGLE_DEFAULT_ZONE:us-central1-f}

primaryCredentials:

name: my-account-name

project: ${SPINNAKER_GOOGLE_PROJECT_ID:}

jsonPath: ${SPINNAKER_GOOGLE_PROJECT_CREDENTIALS_PATH:}

consul:

enabled: ${SPINNAKER_GOOGLE_CONSUL_ENABLED:false}

cf:

enabled: false

defaultOrg: spinnaker-cf-org

defaultSpace: spinnaker-cf-space

primaryCredentials:

name: my-cf-account

api: my-cf-api-uri

console: my-cf-console-base-url

azure:

enabled: ${SPINNAKER_AZURE_ENABLED:false}

defaultRegion: ${SPINNAKER_AZURE_DEFAULT_REGION:westus}

primaryCredentials:

name: my-azure-account

clientId:

appKey:

tenantId:

subscriptionId:

titan:

enabled: false

defaultRegion: us-east-1

primaryCredentials:

name: my-titan-account

kubernetes:

enabled: ${SPINNAKER_KUBERNETES_ENABLED:false}

primaryCredentials:

name: my-kubernetes-account

namespace: default

dockerRegistryAccount: ${providers.dockerRegistry.primaryCredentials.name}

dockerRegistry:

enabled: ${SPINNAKER_KUBERNETES_ENABLED:false}

primaryCredentials:

name: my-docker-registry-account

address: ${SPINNAKER_DOCKER_REGISTRY:https://index.docker.io/}

repository: ${SPINNAKER_DOCKER_REPOSITORY:}

username: ${SPINNAKER_DOCKER_USERNAME:}

passwordFile: ${SPINNAKER_DOCKER_PASSWORD_FILE:}

openstack:

enabled: false

defaultRegion: ${SPINNAKER_OPENSTACK_DEFAULT_REGION:RegionOne}

primaryCredentials:

name: my-openstack-account

authUrl: ${OS_AUTH_URL}

username: ${OS_USERNAME}

password: ${OS_PASSWORD}

projectName: ${OS_PROJECT_NAME}

domainName: ${OS_USER_DOMAIN_NAME:Default}

regions: ${OS_REGION_NAME:RegionOne}

insecure: false

kind: ConfigMap

apiVersion: v1

metadata:

name: default-config

namespace: armory

data:

barometer.yml: |

server:

port: 9092

spinnaker:

redis:

host: ${services.redis.host}

port: ${services.redis.port}

clouddriver-armory.yml: |

aws:

defaultAssumeRole: role/${SPINNAKER_AWS_DEFAULT_ASSUME_ROLE:SpinnakerManagedProfile}

accounts:

- name: default-aws-account

accountId: ${SPINNAKER_AWS_DEFAULT_ACCOUNT_ID:none}

client:

maxErrorRetry: 20

serviceLimits:

cloudProviderOverrides:

aws:

rateLimit: 15.0

implementationLimits:

AmazonAutoScaling:

defaults:

rateLimit: 3.0

AmazonElasticLoadBalancing:

defaults:

rateLimit: 5.0

security.basic.enabled: false

management.security.enabled: false

clouddriver-dev.yml: |

serviceLimits:

defaults:

rateLimit: 2

clouddriver.yml: |

server:

port: ${services.clouddriver.port:7002}

address: ${services.clouddriver.host:localhost}

redis:

connection: ${REDIS_HOST:redis://localhost:6379}

udf:

enabled: ${services.clouddriver.aws.udf.enabled:true}

udfRoot: /opt/spinnaker/config/udf

defaultLegacyUdf: false

default:

account:

env: ${providers.aws.primaryCredentials.name}

aws:

enabled: ${providers.aws.enabled:false}

defaults:

iamRole: ${providers.aws.defaultIAMRole:BaseIAMRole}

defaultRegions:

- name: ${providers.aws.defaultRegion:us-east-1}

defaultFront50Template: ${services.front50.baseUrl}

defaultKeyPairTemplate: ${providers.aws.defaultKeyPairTemplate}

azure:

enabled: ${providers.azure.enabled:false}

accounts:

- name: ${providers.azure.primaryCredentials.name}

clientId: ${providers.azure.primaryCredentials.clientId}

appKey: ${providers.azure.primaryCredentials.appKey}

tenantId: ${providers.azure.primaryCredentials.tenantId}

subscriptionId: ${providers.azure.primaryCredentials.subscriptionId}

google:

enabled: ${providers.google.enabled:false}

accounts:

- name: ${providers.google.primaryCredentials.name}

project: ${providers.google.primaryCredentials.project}

jsonPath: ${providers.google.primaryCredentials.jsonPath}

consul:

enabled: ${providers.google.primaryCredentials.consul.enabled:false}

cf:

enabled: ${providers.cf.enabled:false}

accounts:

- name: ${providers.cf.primaryCredentials.name}

api: ${providers.cf.primaryCredentials.api}

console: ${providers.cf.primaryCredentials.console}

org: ${providers.cf.defaultOrg}

space: ${providers.cf.defaultSpace}

username: ${providers.cf.account.name:}

password: ${providers.cf.account.password:}

kubernetes:

enabled: ${providers.kubernetes.enabled:false}

accounts:

- name: ${providers.kubernetes.primaryCredentials.name}

dockerRegistries:

- accountName: ${providers.kubernetes.primaryCredentials.dockerRegistryAccount}

openstack:

enabled: ${providers.openstack.enabled:false}

accounts:

- name: ${providers.openstack.primaryCredentials.name}

authUrl: ${providers.openstack.primaryCredentials.authUrl}

username: ${providers.openstack.primaryCredentials.username}

password: ${providers.openstack.primaryCredentials.password}

projectName: ${providers.openstack.primaryCredentials.projectName}

domainName: ${providers.openstack.primaryCredentials.domainName:Default}

regions: ${providers.openstack.primaryCredentials.regions}

insecure: ${providers.openstack.primaryCredentials.insecure:false}

userDataFile: ${providers.openstack.primaryCredentials.userDataFile:}

lbaas:

pollTimeout: 60

pollInterval: 5

dockerRegistry:

enabled: ${providers.dockerRegistry.enabled:false}

accounts:

- name: ${providers.dockerRegistry.primaryCredentials.name}

address: ${providers.dockerRegistry.primaryCredentials.address}

username: ${providers.dockerRegistry.primaryCredentials.username:}

passwordFile: ${providers.dockerRegistry.primaryCredentials.passwordFile}

credentials:

primaryAccountTypes: ${providers.aws.primaryCredentials.name}, ${providers.google.primaryCredentials.name}, ${providers.cf.primaryCredentials.name}, ${providers.azure.primaryCredentials.name}

challengeDestructiveActionsEnvironments: ${providers.aws.primaryCredentials.name}, ${providers.google.primaryCredentials.name}, ${providers.cf.primaryCredentials.name}, ${providers.azure.primaryCredentials.name}

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: ${services.spectator.webEndpoint.enabled:false}

prototypeFilter:

path: ${services.spectator.webEndpoint.prototypeFilter.path:}

stackdriver:

enabled: ${services.stackdriver.enabled}

projectName: ${services.stackdriver.projectName}

credentialsPath: ${services.stackdriver.credentialsPath}

stackdriver:

hints:

- name: controller.invocations

labels:

- account

- region

dinghy.yml: ""

echo-armory.yml: |

diagnostics:

enabled: true

id: ${ARMORY_ID:unknown}

armorywebhooks:

enabled: false

forwarding:

baseUrl: http://armory-dinghy:8081

endpoint: v1/webhooks

echo-noncron.yml: |

scheduler:

enabled: false

echo.yml: |

server:

port: ${services.echo.port:8089}

address: ${services.echo.host:localhost}

cassandra:

enabled: ${services.echo.cassandra.enabled:false}

embedded: ${services.cassandra.embedded:false}

host: ${services.cassandra.host:localhost}

spinnaker:

baseUrl: ${services.deck.baseUrl}

cassandra:

enabled: ${services.echo.cassandra.enabled:false}

inMemory:

enabled: ${services.echo.inMemory.enabled:true}

front50:

baseUrl: ${services.front50.baseUrl:http://localhost:8080}

orca:

baseUrl: ${services.orca.baseUrl:http://localhost:8083}

endpoints.health.sensitive: false

slack:

enabled: ${services.echo.notifications.slack.enabled:false}

token: ${services.echo.notifications.slack.token}

spring:

mail:

host: ${mail.host}

mail:

enabled: ${services.echo.notifications.mail.enabled:false}

host: ${services.echo.notifications.mail.host}

from: ${services.echo.notifications.mail.fromAddress}

hipchat:

enabled: ${services.echo.notifications.hipchat.enabled:false}

baseUrl: ${services.echo.notifications.hipchat.url}

token: ${services.echo.notifications.hipchat.token}

twilio:

enabled: ${services.echo.notifications.sms.enabled:false}

baseUrl: ${services.echo.notifications.sms.url:https://api.twilio.com/}

account: ${services.echo.notifications.sms.account}

token: ${services.echo.notifications.sms.token}

from: ${services.echo.notifications.sms.from}

scheduler:

enabled: ${services.echo.cron.enabled:true}

threadPoolSize: 20

triggeringEnabled: true

pipelineConfigsPoller:

enabled: true

pollingIntervalMs: 30000

cron:

timezone: ${services.echo.cron.timezone}

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: ${services.spectator.webEndpoint.enabled:false}

prototypeFilter:

path: ${services.spectator.webEndpoint.prototypeFilter.path:}

stackdriver:

enabled: ${services.stackdriver.enabled}

projectName: ${services.stackdriver.projectName}

credentialsPath: ${services.stackdriver.credentialsPath}

webhooks:

artifacts:

enabled: true

fetch.sh: |+

# Startup helper. It clears old *.yml files from /opt/spinnaker/config, copies the default config files and then any custom ones into ${CONFIG_LOCATION}, and imports a custom CA certificate into the Java trust store when keytool is available. The first argument names the container: "gate" additionally builds the SAML and x509 keystores, and "nginx" installs nginx.conf.

CONFIG_LOCATION=${SPINNAKER_HOME:-"/opt/spinnaker"}/config

CONTAINER=$1

rm -f /opt/spinnaker/config/*.yml

mkdir -p ${CONFIG_LOCATION}

for filename in /opt/spinnaker/config/default/*.yml; do

cp $filename ${CONFIG_LOCATION}

done

if [ -d /opt/spinnaker/config/custom ]; then

for filename in /opt/spinnaker/config/custom/*; do

cp $filename ${CONFIG_LOCATION}

done

fi

add_ca_certs() {

ca_cert_path="$1"

jks_path="$2"

alias="$3"

if [[ "$(whoami)" != "root" ]]; then

echo "INFO: I do not have proper permissions to add CA roots"

return

fi

if [[ ! -f ${ca_cert_path} ]]; then

echo "INFO: No CA cert found at ${ca_cert_path}"

return

fi

keytool -importcert \

-file ${ca_cert_path} \

-keystore ${jks_path} \

-alias ${alias} \

-storepass changeit \

-noprompt

}

if command -v keytool >/dev/null 2>&1; then

echo "INFO: Keytool found adding certs where appropriate"

add_ca_certs "${CONFIG_LOCATION}/ca.crt" "/etc/ssl/certs/java/cacerts" "custom-ca"

else

echo "INFO: Keytool not found, not adding any certs/private keys"

fi

saml_pem_path="/opt/spinnaker/config/custom/saml.pem"

saml_pkcs12_path="/tmp/saml.pkcs12"

saml_jks_path="${CONFIG_LOCATION}/saml.jks"

x509_ca_cert_path="/opt/spinnaker/config/custom/x509ca.crt"

x509_client_cert_path="/opt/spinnaker/config/custom/x509client.crt"

x509_jks_path="${CONFIG_LOCATION}/x509.jks"

x509_nginx_cert_path="/opt/nginx/certs/ssl.crt"

if [ "${CONTAINER}" == "gate" ]; then

if [ -f ${saml_pem_path} ]; then

echo "Loading ${saml_pem_path} into ${saml_jks_path}"

openssl pkcs12 -export -out ${saml_pkcs12_path} -in ${saml_pem_path} -password pass:changeit -name saml

keytool -genkey -v -keystore ${saml_jks_path} -alias saml \

-keyalg RSA -keysize 2048 -validity 10000 \

-storepass changeit -keypass changeit -dname "CN=armory"

keytool -importkeystore \

-srckeystore ${saml_pkcs12_path} \

-srcstoretype PKCS12 \

-srcstorepass changeit \

-destkeystore ${saml_jks_path} \

-deststoretype JKS \

-storepass changeit \

-alias saml \

-destalias saml \

-noprompt

else

echo "No SAML IDP pemfile found at ${saml_pem_path}"

fi

if [ -f ${x509_ca_cert_path} ]; then

echo "Loading ${x509_ca_cert_path} into ${x509_jks_path}"

add_ca_certs ${x509_ca_cert_path} ${x509_jks_path} "ca"

else

echo "No x509 CA cert found at ${x509_ca_cert_path}"

fi

if [ -f ${x509_client_cert_path} ]; then

echo "Loading ${x509_client_cert_path} into ${x509_jks_path}"

add_ca_certs ${x509_client_cert_path} ${x509_jks_path} "client"

else

echo "No x509 Client cert found at ${x509_client_cert_path}"

fi

if [ -f ${x509_nginx_cert_path} ]; then

echo "Creating a self-signed CA (EXPIRES IN 360 DAYS) with java keystore: ${x509_jks_path}"

echo -e "\n\n\n\n\n\ny\n" | keytool -genkey -keyalg RSA -alias server -keystore keystore.jks -storepass changeit -validity 360 -keysize 2048

keytool -importkeystore \

-srckeystore keystore.jks \

-srcstorepass changeit \

-destkeystore "${x509_jks_path}" \

-storepass changeit \

-srcalias server \

-destalias server \

-noprompt

else

echo "No x509 nginx cert found at ${x509_nginx_cert_path}"

fi

fi

if [ "${CONTAINER}" == "nginx" ]; then

nginx_conf_path="/opt/spinnaker/config/default/nginx.conf"

if [ -f ${nginx_conf_path} ]; then

cp ${nginx_conf_path} /etc/nginx/nginx.conf

fi

fi

fiat.yml: |-

server:

port: ${services.fiat.port:7003}

address: ${services.fiat.host:localhost}

redis:

connection: ${services.redis.connection:redis://localhost:6379}

spectator:

applicationName: ${spring.application.name}

webEndpoint:

enabled: ${services.spectator.webEndpoint.enabled:false}

prototypeFilter:

path: ${services.spectator.webEndpoint.prototypeFilter.path:}

stackdriver:

enabled: ${services.stackdriver.enabled}

projectName: ${services.stackdriver.projectName}

credentialsPath: ${services.stackdriver.credentialsPath}

hystrix:

command:

default.execution.isolation.thread.timeoutInMilliseconds: 20000

logging:

level:

com.netflix.spinnaker.fiat: DEBUG

  front50-armory.yml: |
    spinnaker:
      redis:
        enabled: true
        host: redis

  front50.yml: |
    server:
      port: ${services.front50.port:8080}
      address: ${services.front50.host:localhost}
    hystrix:
      command:
        default.execution.isolation.thread.timeoutInMilliseconds: 15000
    cassandra:
      enabled: ${services.front50.cassandra.enabled:false}
      embedded: ${services.cassandra.embedded:false}
      host: ${services.cassandra.host:localhost}
    aws:
      simpleDBEnabled: ${providers.aws.simpleDBEnabled:false}
      defaultSimpleDBDomain: ${providers.aws.defaultSimpleDBDomain}
    spinnaker:
      cassandra:
        enabled: ${services.front50.cassandra.enabled:false}
        host: ${services.cassandra.host:localhost}
        port: ${services.cassandra.port:9042}
        cluster: ${services.cassandra.cluster:CASS_SPINNAKER}
        keyspace: front50
        name: global
      redis:
        enabled: ${services.front50.redis.enabled:false}
      gcs:
        enabled: ${services.front50.gcs.enabled:false}
        bucket: ${services.front50.storage_bucket:}
        bucketLocation: ${services.front50.bucket_location:}
        rootFolder: ${services.front50.rootFolder:front50}
        project: ${providers.google.primaryCredentials.project}
        jsonPath: ${providers.google.primaryCredentials.jsonPath}
      s3:
        enabled: ${services.front50.s3.enabled:false}
        bucket: ${services.front50.storage_bucket:}
        rootFolder: ${services.front50.rootFolder:front50}
    spectator:
      applicationName: ${spring.application.name}
      webEndpoint:
        enabled: ${services.spectator.webEndpoint.enabled:false}
        prototypeFilter:
          path: ${services.spectator.webEndpoint.prototypeFilter.path:}
      stackdriver:
        enabled: ${services.stackdriver.enabled}
        projectName: ${services.stackdriver.projectName}
        credentialsPath: ${services.stackdriver.credentialsPath}
    stackdriver:
      hints:
        - name: controller.invocations
          labels:
            - application
            - cause
        - name: aws.request.httpRequestTime
          labels:
            - status
            - exception
            - AWSErrorCode
        - name: aws.request.requestSigningTime
          labels:
            - exception

  gate-armory.yml: |+
    lighthouse:
      baseUrl: http://${DEFAULT_DNS_NAME:lighthouse}:5000

  gate.yml: |
    server:
      port: ${services.gate.port:8084}
      address: ${services.gate.host:localhost}
    redis:
      connection: ${REDIS_HOST:redis://localhost:6379}
      configuration:
        secure: true
    spectator:
      applicationName: ${spring.application.name}
      webEndpoint:
        enabled: ${services.spectator.webEndpoint.enabled:false}
        prototypeFilter:
          path: ${services.spectator.webEndpoint.prototypeFilter.path:}
      stackdriver:
        enabled: ${services.stackdriver.enabled}
        projectName: ${services.stackdriver.projectName}
        credentialsPath: ${services.stackdriver.credentialsPath}
    stackdriver:
      hints:
        - name: EurekaOkClient_Request
          labels:
            - cause
            - reason
            - status

  igor-nonpolling.yml: |
    jenkins:
      polling:
        enabled: false

  igor.yml: |
    server:
      port: ${services.igor.port:8088}
      address: ${services.igor.host:localhost}
    jenkins:
      enabled: ${services.jenkins.enabled:false}
      masters:
        - name: ${services.jenkins.defaultMaster.name}
          address: ${services.jenkins.defaultMaster.baseUrl}
          username: ${services.jenkins.defaultMaster.username}
          password: ${services.jenkins.defaultMaster.password}
          csrf: ${services.jenkins.defaultMaster.csrf:false}
    travis:
      enabled: ${services.travis.enabled:false}
      masters:
        - name: ${services.travis.defaultMaster.name}
          baseUrl: ${services.travis.defaultMaster.baseUrl}
          address: ${services.travis.defaultMaster.address}
          githubToken: ${services.travis.defaultMaster.githubToken}
    dockerRegistry:
      enabled: ${providers.dockerRegistry.enabled:false}
    redis:
      connection: ${REDIS_HOST:redis://localhost:6379}
    spectator:
      applicationName: ${spring.application.name}
      webEndpoint:
        enabled: ${services.spectator.webEndpoint.enabled:false}
        prototypeFilter:
          path: ${services.spectator.webEndpoint.prototypeFilter.path:}
      stackdriver:
        enabled: ${services.stackdriver.enabled}
        projectName: ${services.stackdriver.projectName}
        credentialsPath: ${services.stackdriver.credentialsPath}
    stackdriver:
      hints:
        - name: controller.invocations
          labels:
            - master

kayenta-armory.yml: |

kayenta:

aws: