11 Self-Attention Compared with RNN and LSTM: Strengths and Weaknesses

Author: Internet

Companion video for this blog series (watch directly on Bilibili): https://space.bilibili.com/383551518?spm_id_from=333.1007.0.0

Companion GitHub repository: https://github.com/nickchen121/Pre-training-language-model

Companion blog: https://www.cnblogs.com/nickchen121/p/15105048.html

RNN

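An RNN cannot handle long sequences: once a passage reaches roughly 50 characters, its performance drops sharply. Everything the model knows about earlier words has to be squeezed through one hidden state that is overwritten at every step, and the gradient that links a late output to an early input shrinks as it is multiplied back through each step.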

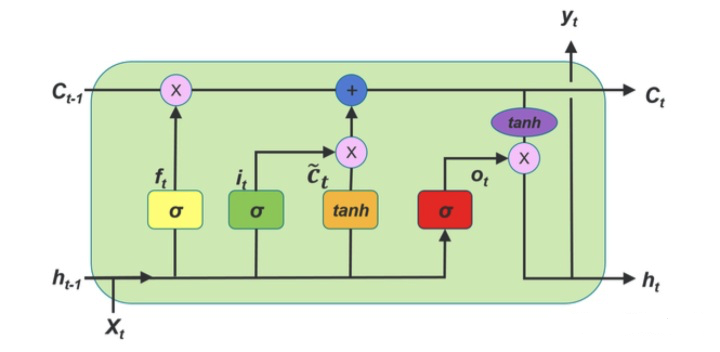
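To make the long-sequence problem concrete, here is a minimal sketch using a scalar RNN. The recurrence `h_t = tanh(w * h_{t-1} + u * x_t)`, the function name `rnn_gradient_through_time`, and the values of `w`, `u`, and the inputs are all assumptions chosen for illustration; they are not taken from the original post. The sketch tracks how strongly the final hidden state still depends on the initial one.

```python
import math

def rnn_gradient_through_time(w, u, inputs, h0=0.0):
    """Run a scalar RNN h_t = tanh(w*h_{t-1} + u*x_t).

    Returns the final hidden state and the derivative d h_T / d h_0,
    which measures how much the first state still influences the last one.
    """
    h = h0
    grad = 1.0
    for x in inputs:
        h = math.tanh(w * h + u * x)
        # Chain rule for one step: d h_t / d h_{t-1} = w * (1 - h_t^2)
        grad *= w * (1.0 - h * h)
    return h, grad

# Illustrative values only (assumed, not from the post).
inputs = [0.5] * 50
_, grad_5 = rnn_gradient_through_time(0.9, 0.5, inputs[:5])
_, grad_50 = rnn_gradient_through_time(0.9, 0.5, inputs)
print("influence after 5 steps: ", abs(grad_5))
print("influence after 50 steps:", abs(grad_50))
```

With `|w| < 1`, every step multiplies the gradient by a factor smaller than 1, so the influence of the first input after 50 steps is far weaker than after 5 steps. This is the scalar version of the vanishing-gradient effect.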

LSTM

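An LSTM uses several gates, such as the forget gate, to selectively remember earlier information, which extends the usable context to roughly 200 words.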

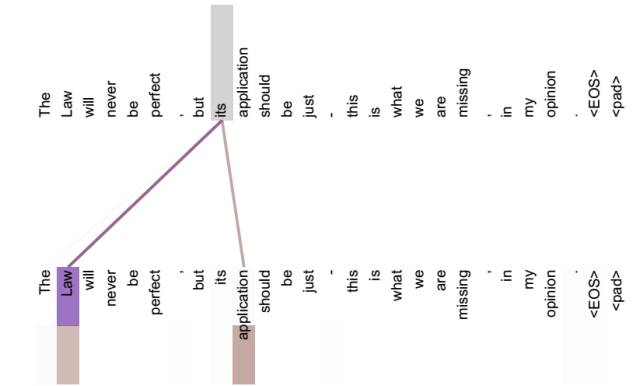
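The gating idea can be sketched with a scalar LSTM cell. This is a simplified illustration under stated assumptions: the state is a single number rather than a vector, and the helper `lstm_step` and the parameter dictionary `params` are hypothetical names with hand-picked values, not a trained model or any library's API.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell.

    p maps each gate name to a tuple (w_x, w_h, b).
    """
    def pre(name):
        w_x, w_h, b = p[name]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre("forget"))       # how much of the old cell state to keep
    i = sigmoid(pre("input"))        # how much new information to write
    o = sigmoid(pre("output"))       # how much of the cell state to expose
    g = math.tanh(pre("candidate"))  # candidate value to write
    c = f * c_prev + i * g           # additive update of the cell state
    h = o * math.tanh(c)
    return h, c

# Hand-picked parameters (assumed): forget gate near 1, input gate near 0.
params = {
    "forget": (0.0, 0.0, 10.0),
    "input": (0.0, 0.0, -10.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (1.0, 1.0, 0.0),
}

h, c = 0.0, 1.0  # start with a "memory" of 1.0 in the cell state
for x in [0.3] * 200:
    h, c = lstm_step(x, h, c, params)
print("cell state after 200 steps:", c)
```

Because the cell state is updated additively and the forget gate stays close to 1, the stored value survives 200 steps almost unchanged. In a trained LSTM the gates are learned and depend on the input, so how long information is kept varies from case to case.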

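Differences between Self-Attention and RNNs

RNNs suffer from the long-range dependency problem and cannot be parallelized: each step needs the hidden state from the previous step, so the tokens have to be processed one after another.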

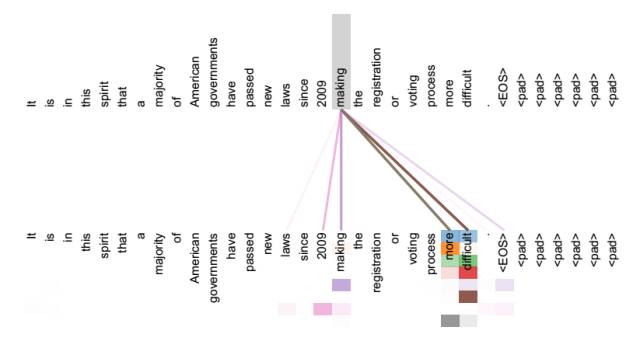

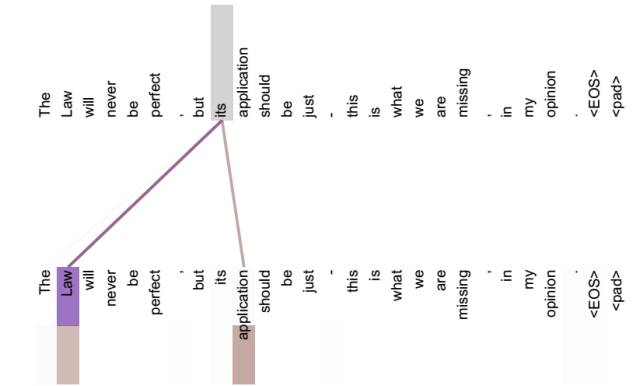

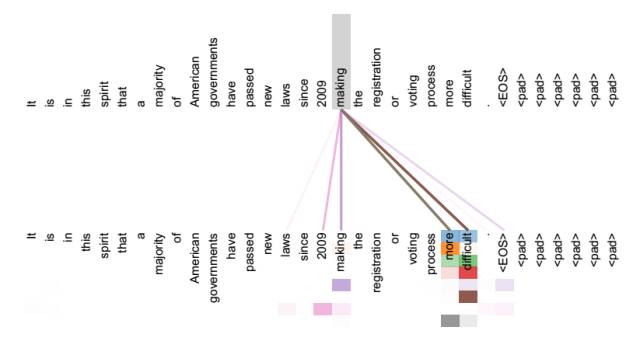

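The new word vectors produced by Self-Attention carry both syntactic and semantic features, so each word's representation is more complete.

Syntactic features

Semantic features

Parallel computation: every position is computed at the same time, with no step-by-step dependency.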
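The points above can be sketched with a bare-bones scaled dot-product self-attention in plain Python. As a simplifying assumption, the queries, keys, and values are all the raw input vectors (`Q = K = V = X`), with no learned projection matrices; the function name `self_attention` and the toy input `X` are hypothetical.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(X):
    """Scaled dot-product self-attention with Q = K = V = X (assumption).

    X is a list of token vectors. Returns (outputs, weights), where
    weights[i][j] is how much token i attends to token j.
    """
    d = len(X[0])
    outputs, weights = [], []
    for q in X:
        # This iteration reads only X, never another token's output,
        # so all iterations could run at the same time.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in X]
        w = softmax(scores)
        out = [sum(w_j * v[c] for w_j, v in zip(w, X)) for c in range(d)]
        weights.append(w)
        outputs.append(out)
    return outputs, weights

# Toy input: three tokens with 2-dimensional vectors (illustrative only).
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(X)
for row in weights:
    print([round(w, 3) for w in row])
```

Each new vector is a weighted mix of all tokens in the sentence, which is how a word's representation picks up information from related words at any distance in a single step. Unlike the RNN loop, the loop over `q` has no dependency between iterations, so a matrix library can compute every row at once as a few matrix products.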

Source: https://www.cnblogs.com/nickchen121/p/16470720.html