A Walkthrough of Registering and Linking AKG Forward and Backward Operators


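Abstract: A brief introduction to how forward and backward operators are registered and linked in AKG.

This article is shared from the Huawei Cloud Community post 《AKG正反向算子注册+关联》 ("AKG Forward and Backward Operator Registration + Linking"), by 木子_007.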

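1. Environment

Hardware: eulerosv2r8.aarch64

MindSpore: 1.1

Operator registration takes effect only after the framework has been compiled and installed. This article therefore assumes that the MindSpore source code is already present in the environment and that it builds and installs successfully.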

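2. Building and Testing the Forward Operator

This section builds an operator that squares each element of a vector.

Forward: y = x**2

Backward: y' = 2*x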
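As a point of reference, the two formulas can be written in plain NumPy. This is only a host-side sketch for checking results, not AKG code; the function names square_forward and square_backward are made up for illustration.

```python
import numpy as np


def square_forward(x):
    """Reference for the forward operator: y = x**2, element-wise."""
    return x * x


def square_backward(x):
    """Reference for the backward operator: y' = 2*x, element-wise."""
    return 2.0 * x


if __name__ == "__main__":
    x = np.array([[1.0, 4.0, 9.0]], dtype=np.float32)
    print(square_forward(x))   # values: 1, 16, 81
    print(square_backward(x))  # values: 2, 8, 18
```

These reference values are the ones the device-side tests later in the article should reproduce.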

The forward operator comes first.

2.1 Defining the forward operator

Path: mindspore/akg/python/akg/ms/cce/. Create cus_square.py there.

Following the compute definitions of the other operators in the same directory, define the compute logic for the element-wise square:

    """cus_square"""
    from akg.tvm.hybrid import script
    from akg.ops.math import mul
    import akg


    def CusSquare(x):
        # The loops below index x as x[i, j], so this definition handles 2-D inputs only.
        output_shape = x.shape
        k = output_shape[0]
        n = output_shape[1]

        @script
        def cus_square_compute(x):
            # output_tensor is a hybrid-script intrinsic; it needs no import.
            y = output_tensor(output_shape, dtype=x.dtype)
            for i in range(k):
                for j in range(n):
                    y[i, j] = x[i, j] * x[i, j]
            return y

        output = cus_square_compute(x)

        attrs = {
            'enable_post_poly_loop_partition': False,
            'enable_double_buffer': False,
            'enable_feature_library': True,
            'RewriteVarTensorIdx': True
        }

        return output, attrs
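To see what the loop nest computes without an Ascend device, the same logic can be emulated in plain Python. This is a sketch under stated assumptions: emulate_cus_square is a hypothetical helper, np.empty stands in for the output_tensor intrinsic, and the explicit dimension check is an addition for clarity. It does not exercise AKG itself.

```python
import numpy as np


def emulate_cus_square(x):
    """Plain-Python stand-in for the hybrid-script loop nest (not AKG code)."""
    if x.ndim != 2:
        # The original script reads shape[0] and shape[1] and indexes x[i, j].
        raise ValueError("expected a 2-D input, got %d-D" % x.ndim)
    k, n = x.shape
    y = np.empty((k, n), dtype=x.dtype)  # stands in for output_tensor
    for i in range(k):
        for j in range(n):
            y[i, j] = x[i, j] * x[i, j]
    return y


if __name__ == "__main__":
    x = np.array([[1.0, 4.0, 9.0]], dtype=np.float32)
    print(emulate_cus_square(x))  # values: 1, 16, 81
```

The emulation makes the 2-D restriction visible: a 1-D vector would have to be reshaped to (1, n) first, which is what the test input in this article does.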

Then add the following line to the __init__.py file in the same directory:

    from .cus_square import CusSquare

2.2 Registering the operator

Go to mindspore/ops/_op_impl/akg/ascend, create cus_square.py, and add the following code:

    """CusSquare op"""
    from mindspore.ops.op_info_register import op_info_register, AkgAscendRegOp, DataType as DT

    op_info = AkgAscendRegOp("CusSquare") \
        .fusion_type("ELEMWISE") \
        .input(0, "x") \
        .output(0, "output") \
        .dtype_format(DT.F32_Default, DT.F32_Default) \
        .get_op_info()


    @op_info_register(op_info)
    def _cus_square_akg():
        """CusSquare Akg register"""
        return

Then add the following line to the __init__.py file in the same directory:

    from .cus_square import _cus_square_akg

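2.3 Defining the operator primitive

Go to mindspore/ops/operations, create a new file _cus_ops.py, and add the code below.

The primitive declares the operator's input, x, and its output, output.

infer_shape: returns the shape of the output data.

infer_dtype: returns the data type of the output data.

x1_shape: the shape of the first input.

x1_dtype: the dtype of the first input.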

    import math

    from ..primitive import prim_attr_register, PrimitiveWithInfer
    from ...common import dtype as mstype
    from ..._checkparam import Validator as validator
    from ..._checkparam import Rel


    class CusSquare(PrimitiveWithInfer):
        """CusSquare"""

        @prim_attr_register
        def __init__(self):
            self.init_prim_io_names(inputs=['x'], outputs=['output'])

        def infer_shape(self, x1_shape):
            return x1_shape

        def infer_dtype(self, x1_dtype):
            return x1_dtype

Then export the primitive from the __init__.py file in the same directory:

    from ._cus_ops import CusSquare
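The infer_shape and infer_dtype contract from this step can be illustrated without MindSpore. The class below is a standalone mock, not a PrimitiveWithInfer subclass; the name MockCusSquare and the use of a plain string for the dtype are assumptions made for illustration. It shows that for an element-wise operator both methods simply pass the first input's shape and dtype through.

```python
class MockCusSquare:
    """Standalone mock of the shape/dtype inference contract (not MindSpore)."""

    def infer_shape(self, x1_shape):
        # Element-wise op: the output has the same shape as the first input.
        return x1_shape

    def infer_dtype(self, x1_dtype):
        # The output keeps the dtype of the first input.
        return x1_dtype


if __name__ == "__main__":
    op = MockCusSquare()
    print(op.infer_shape([1, 3]))     # [1, 3]
    print(op.infer_dtype("float32"))  # float32
```

An operator whose output shape differs from its input, such as a reduction, would compute a new shape in infer_shape instead of returning the argument unchanged.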

2.4 Adding the operator's query information in ccsrc

In the KernelQuery function of mindspore/ccsrc/backend/kernel_compiler/kernel_query.cc, add the following:

    // cus_square
    const PrimitivePtr kPrimCusSquare = std::make_shared<Primitive>("CusSquare");
    if (IsPrimitiveCNode(kernel_node, kPrimCusSquare)) {
      kernel_type = KernelType::AKG_KERNEL;
    }

2.5 Building and installing the framework

Return to the mindspore root directory and run:

    bash build.sh -e ascend -j4
    cd ./build/package
    pip install mindspore_ascend-1.1.2-cp37-cp37m-linux_aarch64.whl --force-reinstall
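After reinstalling, it can be worth confirming that Python picks up the rebuilt package and that the new primitive is exported. The helper below is a minimal sketch using only the standard library; has_attribute is a hypothetical name, and the module path and attribute in the usage line are the ones this article registers.

```python
import importlib


def has_attribute(module_name, attr_name):
    """Return True if the module imports cleanly and exposes attr_name."""
    try:
        module = importlib.import_module(module_name)
    except ImportError:
        # The package is not installed, or not visible to this interpreter.
        return False
    return hasattr(module, attr_name)


if __name__ == "__main__":
    # Expected to print True once the rebuilt wheel is installed.
    print(has_attribute("mindspore.ops.operations", "CusSquare"))
```

A False result usually points to a stale install or a missing __init__.py export rather than to the compute logic.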

2.6 测试

import numpy as np

import mindspore.nn as nn

import mindspore.context as context

from mindspore import Tensor

from mindspore.ops import operations as P

context.set_context(mode=context.GRAPH_MODE, device_target="Ascend")

class Net(nn.Cell):

def __init__(self):

super(Net, self).__init__()

self.square = P.CusSquare()

def construct(self, data):

return self.square(data)

def test_net():

x = np.array([[1.0, 4.0, 9.0]]).astype(np.float32)

net = Net()

output = net(Tensor(x))

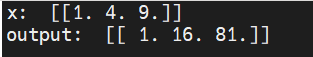

print("x: ", x)

print("output: ", output)

if __name__ == "__main__":

test_net()

输出

3. Building and Testing the Backward Operator

3.1 Build steps

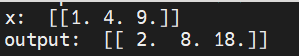

The backward operator computes the element-wise derivative: for y = x^2, the derivative is y' = 2x.

For example, the input vector [1, 4, 9] produces the output [2, 8, 18].
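The derivative can be sanity-checked numerically on the host before any device code is written. The sketch below uses a central difference; numerical_derivative and the step size h are illustrative choices, not part of AKG or MindSpore.

```python
import numpy as np


def numerical_derivative(f, x, h=1e-3):
    """Central-difference estimate of df/dx, applied element-wise."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


if __name__ == "__main__":
    x = np.array([1.0, 4.0, 9.0], dtype=np.float64)
    estimate = numerical_derivative(lambda v: v * v, x)
    print(estimate)                         # close to 2, 8, 18
    print(np.allclose(estimate, 2.0 * x))   # True
```

For a quadratic the central difference is exact up to rounding, so the estimate matches 2x very closely.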

The backward operator is named CusSquareGrad. It follows the same steps as the square operator above, so only the key code is shown here and the steps are not repeated.

Compute logic, cus_square_grad.py:

    """cus_square_grad"""
    from akg.tvm.hybrid import script
    import akg


    def CusSquareGrad(x):
        output_shape = x.shape
        k = output_shape[0]
        n = output_shape[1]

        @script
        def cus_square_compute_grad(x):
            y = output_tensor(output_shape, dtype=x.dtype)
            for i in range(k):
                for j in range(n):
                    y[i, j] = x[i, j] * 2
            return y

        output = cus_square_compute_grad(x)

        attrs = {
            'enable_post_poly_loop_partition': False,
            'enable_double_buffer': False,
            'enable_feature_library': True,
            'RewriteVarTensorIdx': True
        }

        return output, attrs

Registering the primitive:

    class CusSquareGrad(PrimitiveWithInfer):
        """
        CusSquareGrad
        """

        @prim_attr_register
        def __init__(self):
            self.init_prim_io_names(inputs=['x'], outputs=['output'])

        def infer_shape(self, x1_shape):
            return x1_shape

        def infer_dtype(self, x1_dtype):
            return x1_dtype

3.2 Testing

    import numpy as np
    import mindspore.nn as nn
    import mindspore.context as context
    from mindspore import Tensor
    from mindspore.ops import operations as P

    context.set_context(mode=context.GRAPH_MODE, device_target="Ascend")


    class Net(nn.Cell):
        def __init__(self):
            super(Net, self).__init__()
            self.square = P.CusSquareGrad()  # swapped in the grad operator

        def construct(self, data):
            return self.square(data)


    def test_net():
        x = np.array([[1.0, 4.0, 9.0]]).astype(np.float32)
        net = Net()
        output = net(Tensor(x))
        print("x: ", x)
        print("output: ", output)


    if __name__ == "__main__":
        test_net()

Output:

4. Linking the Forward and Backward Operators, and Testing

In the source file mindspore/mindspore/ops/_grad/grad_array_ops.py, add the following code:

    @bprop_getters.register(P.CusSquare)
    def get_bprop_cussquare(self):
        """Generate bprop of CusSquare"""
        cus_square_grad = P.CusSquareGrad()
        # Element-wise multiply, taken from the same P module used above.
        mul = P.Mul()

        def bprop(x, out, dout):
            gradient = cus_square_grad(x)
            dx = mul(gradient, dout)
            return (dx,)

        return bprop

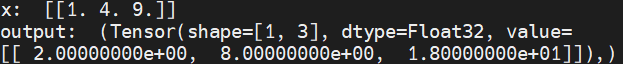

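The bprop function takes three inputs: the forward input x, the forward output out, and the incoming gradient dout from the backward pass.

The code above specifies how the backward gradient of CusSquare is computed; CusSquareGrad is used as one function inside that computation.

gradient = cus_square_grad(x) computes the local gradient of the square operator itself, but this gradient cannot be returned directly.

When backpropagation reaches this operator, the value it returns is dx. Note that an operator's gradient must always be computed as part of the whole network's backward chain rule, which is why the local gradient is multiplied by dout.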
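The role of dout can be sketched in NumPy. The function below is a host-side stand-in for the registered bprop, not MindSpore code; the name bprop_sketch and the example dout values are assumptions made for illustration.

```python
import numpy as np


def bprop_sketch(x, dout):
    """NumPy stand-in for the registered bprop: dx = (2 * x) * dout."""
    gradient = 2.0 * x     # local gradient, the role CusSquareGrad plays
    dx = gradient * dout   # chain rule: scale by the upstream gradient
    return (dx,)


if __name__ == "__main__":
    x = np.array([[1.0, 4.0, 9.0]], dtype=np.float32)

    # Upstream gradient of all ones: dx equals the local gradient.
    print(bprop_sketch(x, np.ones_like(x))[0])       # values: 2, 8, 18

    # If a later op scaled its input by 3, it would send dout = 3 back.
    print(bprop_sketch(x, np.full_like(x, 3.0))[0])  # values: 6, 24, 54
```

With an all-ones dout the result reduces to 2*x. As far as the MindSpore documentation describes, GradOperation attaches an all-ones sensitivity when no explicit one is passed, so a network consisting only of the square operator should return [2, 8, 18] for the input used in this article.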

Testing

    import numpy as np
    import mindspore.nn as nn
    import mindspore.context as context
    from mindspore import Tensor
    from mindspore.ops import operations as P
    from mindspore.ops import composite as C

    context.set_context(mode=context.GRAPH_MODE, device_target="Ascend")


    class Net(nn.Cell):
        def __init__(self):
            super(Net, self).__init__()
            self.square = P.CusSquare()

        def construct(self, data):
            return self.square(data)


    def test_net():
        x = Tensor(np.array([[1.0, 4.0, 9.0]]).astype(np.float32))
        grad = C.GradOperation(get_all=True)  # computes the gradient of the network
        net = Net()
        output = grad(net)(x)
        print("x: ", x)
        print("output: ", output)


    if __name__ == "__main__":
        test_net()

Output:

Source: https://www.cnblogs.com/huaweiyun/p/15596997.html